Claude Extension Flaw Enabled Zero-Click XSS Prompt Injection via Any Website

Photo: The Hacker News

A critical security vulnerability in the Claude browser extension, discovered by Koi Security researcher Oren Yomtov, allowed an attacker to take control of a user's AI session through a Zero-Click XSS Prompt Injection attack. A person using the official Anthropic add-on only had to visit a specially crafted website: malicious code hidden in the page content could then automatically "instruct" the AI model, for example to send conversation history to an attacker-controlled server. The issue stemmed from the extension's permission to read the content of every visited tab, which, combined with a Cross-Site Scripting (XSS) vulnerability, paved the way for the theft of sensitive data without any interaction from the victim.

For creators and professionals who use AI assistants, this is a clear signal that integrating Large Language Models directly into the browser carries new, specific risks. Although Anthropic responded quickly and implemented fixes, the incident underscores the need to apply Zero Trust principles even to tools from reputable vendors. Data that an AI processes in real time while the user browses the web can become a target for automated Prompt Injection attacks. Security in the era of generative AI requires not only strong passwords but, above all, rigorous permission isolation for applications that have insight into our digital lives.

The security of artificial intelligence systems is no longer a matter of theoretical debate about "rogue algorithms"; it has become a real battlefield against classic web vulnerabilities. The latest discovery by researchers from Koi Security sheds light on a critical flaw in Anthropic's official Claude extension for Google Chrome. The vulnerability allowed a Zero-Click XSS Prompt Injection attack: a user could fall victim to manipulation of the AI model without doing anything other than visiting a malicious website.

The scale of the problem is significant because browser extensions for LLMs (Large Language Models) are designed to work with website content, which requires broad permissions to read and modify data within the browser. In the case of Claude, a design flaw allowed attackers to "inject" malicious instructions directly into the assistant, bypassing authorization mechanisms and user interaction. Researcher Oren Yomtov, who disclosed the details in the report, points to the unprecedented ease with which any website could take control of an AI session.

The mechanism of a zero-click attack

Traditional Cross-Site Scripting (XSS) attacks usually rely on a flaw in input validation that lets a script execute in the context of another site. In the case of the Claude extension, an attacker could exploit the vulnerability to silently send commands to the model. As Oren Yomtov noted, the system allowed any site to "silently inject prompts into the assistant as if the user had written them themselves." This removes the barrier normally provided by the need for a human to interact with the AI interface.


A key aspect of this vulnerability is that it is zero-click. The user does not have to approve a query or click on suspicious links within the chat. It is enough for a page containing malicious code to be open in one of the browser tabs, and the Claude extension will treat the instructions it contains as legitimate commands from the account owner. This opens the way for data theft, phishing, or manipulation of the assistant's output in a way the victim does not notice.

Prompt Injection combined with XSS creates a dangerous synergy. A standard prompt injection is often limited to changing the model's behavior (e.g., forcing it to ignore previous instructions), but exploiting a vulnerability in a browser extension gives the attacker access to the entire ecosystem of user data that Claude can reach. This can include conversation history, data from other open tabs, or confidential documents analyzed by the AI.

Security architecture vs. modern threats

The vulnerability in the Anthropic tool highlights a broader industry problem: the pursuit of utility often outpaces rigorous Zero Trust security testing. While organizations modernize access and work to eliminate lateral movement in their networks, AI extensions are becoming a new, poorly protected entry point. Instead of attacking infrastructure directly, an attacker targets the intermediary layer between the user and the language model.

Modern security approaches, such as ZTNA (Zero Trust Network Access), assume that no connection or user is trusted by default. Browser extensions, by contrast, often fully trust the content rendered in Google Chrome. If an extension can read page content in order to summarize it, there must be a tight barrier separating passive data from the active instructions that control the model. In the Claude extension, this barrier proved to be leaky.
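The barrier between passive data and active instructions can be illustrated with a minimal Python sketch. It is not Anthropic's implementation; the type names, the `"document"` role, and the `trusted` flag are hypothetical and exist only to show the idea that page text travels in a separate, explicitly untrusted channel and is never promoted to an instruction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserInstruction:
    """Text the account owner typed into the extension's own UI."""
    text: str


@dataclass(frozen=True)
class PageContent:
    """Text read from a browser tab; always treated as untrusted data."""
    origin: str
    text: str


def build_messages(instruction: UserInstruction, page: PageContent) -> list[dict]:
    """Assemble a request in which only the user's text acts as an instruction."""
    # Refuse anything that is not a genuine UI instruction, so page text
    # can never be passed in the instruction slot by mistake.
    if not isinstance(instruction, UserInstruction):
        raise TypeError("instructions must come from the extension UI")
    if not isinstance(page, PageContent):
        raise TypeError("page data must be wrapped as PageContent")
    return [
        {"role": "user", "content": instruction.text},
        # Hypothetical "document" role: carried as data, flagged as untrusted.
        {"role": "document", "origin": page.origin, "trusted": False,
         "content": page.text},
    ]
```

Separating the two channels by type does not make a model immune to instructions embedded in the data, but it gives downstream checks a reliable way to tell where each piece of text came from.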

The attack combined four properties:

- Zero-Click Vulnerability: No requirement for user interaction.

- Cross-Site Scripting (XSS): Use of web scripts to manipulate the extension.

- Prompt Injection: The ability to force the AI to execute any command.

- Silent Execution: The attack takes place in the background, often without a trace in the user interface.

For organizations implementing AI tools, this incident is a warning sign. Network-level measures, such as replacing VPNs with more modern ZTNA solutions, are necessary but insufficient if vulnerabilities exist in the application layer that employees use daily to process company data. Connecting users directly to applications must go hand in hand with isolating operational contexts within the browser itself.

A new paradigm for AI assistant protection

The discovery of the flaw by Koi Security prompted Anthropic to react quickly and patch the bug in the Claude extension for Google Chrome. However, the mere existence of such a vulnerability in a product from one of the leading AI companies suggests that we are facing a new class of threats. XSS attacks are not new, but applying them to hijack the reasoning of LLMs changes the threat model. An attacker no longer needs to steal session cookies if they can simply tell the AI assistant to send a summary of a confidential document to an external server.

The long-term solution is not just patching individual bugs, but changing the architecture of extensions. They must operate in an environment with the lowest possible permissions (Principle of Least Privilege), where every attempt to send a prompt originating from an external site is treated as a potential threat and requires explicit Human-in-the-loop authorization. Without this, AI assistants, instead of increasing productivity, will become the weakest link in the digital security chain.
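A minimal Python sketch of such a gate follows. It is an illustration under stated assumptions, not the extension's actual code: the extension ID, the `TRUSTED_UI_ORIGINS` set, and the `confirm` callback are hypothetical. The only points it demonstrates are exact-match origin checking and falling back to explicit user approval for everything else.

```python
from typing import Callable
from urllib.parse import urlsplit

# Hypothetical: the only origin allowed to submit prompts without a
# confirmation step is the extension's own UI.
TRUSTED_UI_ORIGINS = frozenset({"chrome-extension://hypothetical-extension-id"})


def origin_of(url: str) -> str:
    """Reduce a URL to scheme://host[:port] so it can be compared exactly."""
    parts = urlsplit(url)
    return f"{parts.scheme}://{parts.netloc}"


def accept_prompt(sender_url: str, prompt: str,
                  confirm: Callable[[str, str], bool]) -> bool:
    """Return True only if the prompt may be forwarded to the model."""
    origin = origin_of(sender_url)
    # Exact match only: no suffix or wildcard matching that a lookalike
    # host name could satisfy.
    if origin in TRUSTED_UI_ORIGINS:
        return True
    # Anything else is a potential threat and needs explicit human approval.
    return confirm(origin, prompt)
```

In a real extension the `confirm` callback would surface a visible dialog showing the origin and the prompt text; denying by default when the user does not respond keeps the gate fail-closed.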

Prompt auditing tools are likely to become more professional in the near future. Since Zero-Click XSS allowed such deep interference, developers will have to implement filtering layers that distinguish user intent from instructions hidden in the HTML code of pages. AI security is moving from the server room directly to the user's browser, making digital hygiene and the choice of proven tools more important than ever.
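One possible filtering layer, sketched in Python with the standard library's `html.parser`, keeps only text a reader could plausibly see and drops comments, scripts, and elements hidden through inline attributes. The tag sets and the `visible_text` helper are illustrative assumptions; hiding done through CSS classes or external stylesheets is not detected, and visible text can still carry injected instructions, so this is one heuristic layer rather than a complete defense.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}
# Elements whose content is never shown to the reader as page text.
SKIPPED_TAGS = {"script", "style", "template", "noscript"}


def _is_hidden(tag, attrs):
    """Heuristic: hidden via tag type, `hidden`, `aria-hidden`, or inline style."""
    attr_map = {name: (value or "") for name, value in attrs}
    style = attr_map.get("style", "").replace(" ", "").lower()
    return (tag in SKIPPED_TAGS
            or "hidden" in attr_map
            or attr_map.get("aria-hidden") == "true"
            or "display:none" in style
            or "visibility:hidden" in style)


class VisibleText(HTMLParser):
    """Collects text that sits outside any hidden element."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._hidden_depth = 0  # > 0 while inside a hidden subtree

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self._hidden_depth or _is_hidden(tag, attrs):
            self._hidden_depth += 1

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self._hidden_depth:
            self._hidden_depth -= 1

    def handle_data(self, data):
        if not self._hidden_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html):
    """Return visible text only; HTML comments are dropped by the parser default."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)
```

With unbalanced markup inside a hidden element, the depth counter can stay above zero and suppress later text; for a security filter that over-hiding is the safer failure mode.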

More from Security

$285 Million Drift Hack Traced to Six-Month DPRK Social Engineering Operation

36 Malicious npm Packages Exploited Redis, PostgreSQL to Deploy Persistent Implants

Fortinet Patches Actively Exploited CVE-2026-35616 in FortiClient EMS

China-Linked TA416 Targets European Governments with PlugX and OAuth-Based Phishing

Related Articles

How LiteLLM Turned Developer Machines Into Credential Vaults for Attackers

Apr 6

Qilin and Warlock Ransomware Use Vulnerable Drivers to Disable 300+ EDR Tools

Apr 6

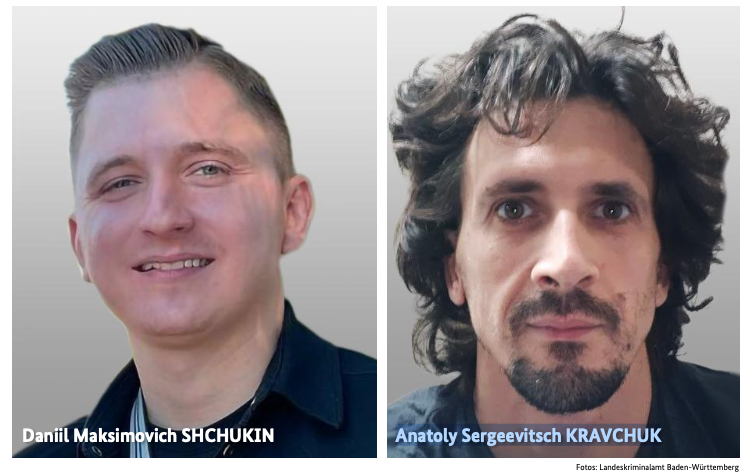

BKA Identifies REvil Leaders Behind 130 German Ransomware Attacks

Apr 6