How LiteLLM Turned Developer Machines Into Credential Vaults for Attackers


A critical vulnerability in LiteLLM, a popular tool for unifying the APIs of various language models, could have turned developers' workstations into open vaults of credentials. According to the Zscaler ThreatLabz 2026 VPN Risk Report, a Server-Side Request Forgery (SSRF) flaw allowed attackers to remotely steal API keys for services such as OpenAI, Anthropic, and Google Cloud Vertex AI. With AI being deployed at scale and developers handling dozens of sensitive tokens, the flaw became the shortest path to a full compromise of an organization's cloud infrastructure.

The report highlights an alarming trend: artificial intelligence has drastically shortened the time defenders have to react to incidents, leaving traditional VPN systems obsolete and exposed to lateral movement attacks. For users and companies that rely on open-source libraries to manage LLMs, this calls for an immediate review of network permissions and a transition to a Zero Trust Exchange architecture. Instead of relying on perimeter security, creative and technical teams must isolate AI processes from the rest of the system, because a single proxy configuration error can leak API budgets and training data to cybercriminals. AI security does not end with the model itself; it begins with the integrity of the tools that support it.

In today's technological ecosystem, it is not the production servers but the developers' workstations that have become the most active elements of corporate infrastructure. A developer's laptop is the place where credentials are generated, tested, cached, and copied between services, bots, and build tools. In March 2026, the hacking group TeamPCP proved that these machines currently represent the weakest link in the supply chain, utilizing the popular LiteLLM tool to transform personal computers into open vaults of authentication data.

The attack carried out by TeamPCP shed new light on the threats posed by local AI agents and by intermediary tools that access language models. According to the latest Zscaler ThreatLabz 2026 VPN Risk Report, prepared in collaboration with Cybersecurity Insiders, artificial intelligence has drastically shortened the time defenders have to respond to security incidents. What used to take attackers days now happens in milliseconds, turning remote access into the fastest path to a total breach of an organization's security.

Vulnerability architecture in the AI ecosystem

LiteLLM gained immense popularity by simplifying integration with dozens of AI model providers through a unified, OpenAI-style format. However, what was meant to be a convenience for programmers became a supply chain attack vector. Developers, striving for maximum efficiency, often store API keys for services such as Anthropic, OpenAI, or Google Vertex AI directly in the environment variables of their local machines. TeamPCP struck precisely at this touchpoint by infecting the dependencies that local AI agent instances relied upon.
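To see why keys kept in environment variables are so exposed, it helps to list what any process started from a developer's shell inherits. The sketch below is only an illustration: the name patterns in `SECRET_NAME` and the helper `find_secret_like_vars` are assumptions made for this example, not part of LiteLLM or of the Zscaler report.

```python
import os
import re

# Hypothetical naming patterns; adjust them to the providers actually in use.
SECRET_NAME = re.compile(
    r"(API_KEY|TOKEN|SECRET|PASSWORD|CREDENTIALS)", re.IGNORECASE
)


def find_secret_like_vars(environ):
    """Return the sorted names of variables whose name suggests a credential."""
    return sorted(name for name in environ if SECRET_NAME.search(name))


if __name__ == "__main__":
    # Every child process of this shell, including any imported library,
    # can read the values behind these names.
    for name in find_secret_like_vars(os.environ):
        print(f"inherited by child processes: {name}")
```

Only the variable names are printed, never the values, so the output can be shared during a review without leaking anything further.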


When a developer runs a local AI agent, the process often possesses broad permissions to read configuration files and browser cache. Using LiteLLM as an entry point, attackers were able to steal not only API keys but also VPN session tokens and credentials for CI/CD systems. The scale of this phenomenon shows that the traditional approach to endpoint security is completely unprepared for the dynamics of LLM-driven tools.

- API keys in memory: AI agents often keep unencrypted credentials in RAM, making them easy to extract.

- Automated exfiltration: TeamPCP scripts were able to identify the most valuable tokens in real-time and send them to C2 servers.

- Trust in local processes: EDR systems often ignore the activity of developer tools, considering them safe "work environments."

The end of the era of secure remote access

The Zscaler ThreatLabz analysis points to an alarming trend: AI has eliminated the time margin that previously allowed SOC (Security Operations Center) teams to intervene effectively. In the case of the LiteLLM attack, infection and identity theft occurred almost immediately after the infected module was launched. Traditional VPN solutions, instead of protecting company resources, became a highway for attackers who, armed with credentials hijacked from a developer's machine, logged into the network as authorized users.

The problem is compounded by the fact that modern AI tools depend on a vast number of external libraries, each of which is a potential entry point. TeamPCP did not have to break through complex firewalls; it was enough for their malicious code to find its way into one of the updates of auxiliary scripts used by the LiteLLM community. This is a classic example of poisoning the well, where one compromised component reaches thousands of developers worldwide.
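One common defence against a poisoned update is to pin each dependency to a known digest and refuse anything that differs; pip offers this through its `--require-hashes` mode. The sketch below shows the underlying check in a few lines. The helper names `sha256_of` and `matches_pin` are hypothetical, and the pinned value is assumed to come from a reviewed lock file.

```python
import hashlib
import hmac


def sha256_of(path, chunk_size=65536):
    """Stream a file from disk and return its SHA-256 digest as hex."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_pin(path, pinned_hex):
    """True only if the artifact on disk has exactly the pinned digest."""
    return hmac.compare_digest(sha256_of(path), pinned_hex.strip().lower())
```

A check like this only helps if the pin was recorded before the compromise; pinning to a digest taken from an already infected release protects nothing.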

It is worth noting the specifics of working with local AI agents. These autonomous programs are designed to perform operations on behalf of the user: writing code, calling system functions, or communicating with external databases. If such an agent is compromised at the source (through a library such as LiteLLM), it becomes the perfect "Trojan horse" that already has all the permissions it needs to move through the company's infrastructure.

A new paradigm: From trust to isolation

The incident from March 2026 forces the industry to redefine the concept of a "secure workstation." Since developers must use AI tools, and these inherently carry the risk of a supply chain attack, the only solution seems to be the total isolation of runtime environments. Relying on the programmer to independently ensure the security of their credentials has proven to be a flawed and costly strategy.
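Full isolation usually means containers or dedicated virtual machines, but the principle can be shown at a much smaller scale: start the tool with an environment that contains only what it needs. The following is a minimal sketch under that assumption. The allowlist `ALLOWED` and the helpers `scrubbed_env` and `run_isolated` are hypothetical names, and this approach does nothing about files or browser caches the process can still read.

```python
import os
import subprocess
import sys

# Hypothetical allowlist: only what a child process needs in order to start.
ALLOWED = {"PATH", "HOME", "LANG", "SYSTEMROOT"}


def scrubbed_env(environ, extra=None):
    """Copy only allowlisted variables, then add explicitly granted ones."""
    env = {k: v for k, v in environ.items() if k in ALLOWED}
    env.update(extra or {})
    return env


def run_isolated(cmd, extra=None):
    """Run cmd without inheriting the parent's secrets."""
    return subprocess.run(
        cmd,
        env=scrubbed_env(os.environ, extra),
        capture_output=True,
        text=True,
        check=False,
    )


if __name__ == "__main__":
    # The child reports how many variables it can see.
    probe = [sys.executable, "-c", "import os; print(len(os.environ))"]
    print(run_isolated(probe).stdout.strip())
```

Granting a single key through `extra` for one invocation keeps the blast radius of a compromised dependency to that key, instead of everything the developer's shell happens to hold.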

"The developer's laptop has ceased to be a work tool and has become the most valuable strategic target. Whoever controls the Python environment on an engineer's machine controls the keys to the entire corporation's kingdom."

The conclusions from the Zscaler report are clear: organizations must move away from a VPN-based model in favor of a Zero Trust architecture that verifies every process, not just the user. In a world where AI can exploit a stolen token in a fraction of a second, static security methods are useless. It is necessary to implement dynamic monitoring systems that can detect anomalies in the behavior of tools like LiteLLM before data leaves the local machine.
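What such per-process verification might look like can be sketched as an egress allowlist: every outbound connection a tool makes is compared with the destinations it is expected to reach. The example below is illustrative only. The `Connection` record, the `ALLOWED_HOSTS` set, and the sample data are assumptions, and a real deployment would take connection records from an endpoint agent or a proxy log.

```python
from dataclasses import dataclass

# Hypothetical allowlist of destinations an LLM client is expected to reach.
ALLOWED_HOSTS = frozenset({"api.openai.com", "api.anthropic.com"})


@dataclass(frozen=True)
class Connection:
    process: str
    host: str


def unexpected_destinations(connections, allowed=ALLOWED_HOSTS):
    """Return the connections whose host is not on the allowlist."""
    return [
        c for c in connections
        if c.host.lower().rstrip(".") not in allowed
    ]


if __name__ == "__main__":
    observed = [
        Connection("python", "api.openai.com"),
        Connection("python", "collector.example.net"),  # made-up host
    ]
    for c in unexpected_destinations(observed):
        print(f"blocked: {c.process} -> {c.host}")
```

An allowlist fails closed: a destination nobody anticipated is blocked by default, which matters when exfiltration can finish long before an analyst reads the alert.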

The TeamPCP attack is just the beginning of a new wave of threats in which AI is not only the target but also the catalyst for breaches. Developers, as the creators of these technologies, paradoxically become their first victims if they do not change their habits regarding digital hygiene and secret management. The era of "trusted" local machines has definitively come to an end; the future belongs to micro-segmentation and the full isolation of creative processes from critical network resources.

More from Security

36 Malicious npm Packages Exploited Redis, PostgreSQL to Deploy Persistent Implants

Fortinet Patches Actively Exploited CVE-2026-35616 in FortiClient EMS

China-Linked TA416 Targets European Governments with PlugX and OAuth-Based Phishing

Microsoft Details Cookie-Controlled PHP Web Shells Persisting via Cron on Linux Servers

Related Articles

Qilin and Warlock Ransomware Use Vulnerable Drivers to Disable 300+ EDR Tools

Apr 6

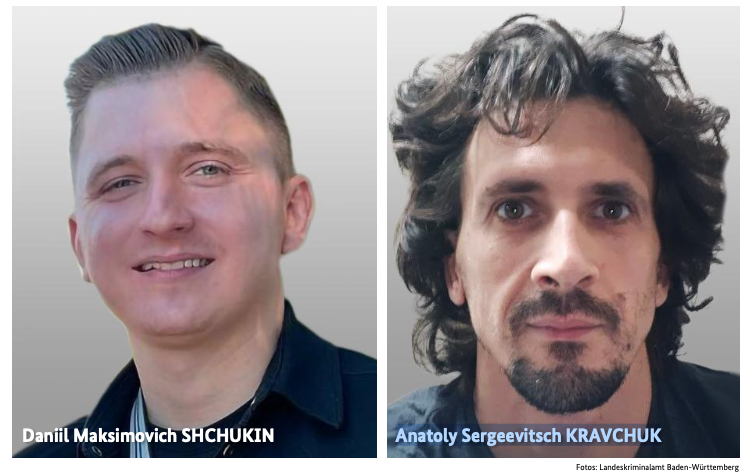

BKA Identifies REvil Leaders Behind 130 German Ransomware Attacks

Apr 6

Germany Doxes “UNKN,” Head of RU Ransomware Gangs REvil, GandCrab

Apr 6