Anthropic struggling with Chinese competition, its own safety obsession

Photo: The Register

Anthropic's market share fell to just 13.3% in March 2026, a loss of more than half its position in twelve months. Despite raising $30 billion in funding, the creators of the Claude models face a brutal financial reality: against revenues of approximately $5 billion, training and inference costs alone have consumed $10 billion. This disparity casts a shadow over plans for an initial public offering (IPO) scheduled for the fourth quarter of 2026. The primary threat is the offensive from Chinese AI laboratories. In the popularity rankings of the OpenRouter platform, models from China, including those from DeepSeek and MiniMax, currently occupy all of the top six places, pushing Claude Opus 4.6 down to seventh. For global users, the economic calculation is key: one Chinese competitor offers 90% of the quality of the Opus model for just 7% of its price. Anthropic further limits the utility of its tools through restrictive token limits during peak hours and a strong focus on safety, which in the eyes of many developers makes the models less flexible. The situation shows that an ethical approach and advanced safeguards can become a business burden when facing aggressive, low-cost competition. Without systemic solutions to protect intellectual property, pioneers of safe artificial intelligence risk running out of cash while more cost-efficient rivals gain ground.

In the world of artificial intelligence, where capital flows freely and ambitions extend to building digital intelligence itself, **Anthropic** seemed to be a bastion of ethics and safety. However, the latest financial and market data paint a picture of a company caught in the trap of its own principles. While the creators of the **Claude** models prepare for an IPO planned for the **fourth quarter of 2026**, problems are mounting that the ideology of "responsible AI" alone cannot solve.

The company's financial situation is far from ideal, as revealed by CFO **Krishna Rao** in a recent legal filing. Despite raising a staggering **$30 billion** from investors, Anthropic has generated only **$5 billion** in revenue to date. By comparison, the costs of model training and inference alone have already consumed **$10 billion**. This disparity is forcing the company to implement desperate cost-cutting measures, such as reducing token limits during peak hours, which is rarely a sign of market strength.

Chinese dominance in the LLM popularity charts

The greatest threat to Anthropic, however, is not its own balance sheet but the lightning-fast progress of laboratories in China. According to a report by the **US-China Economic and Security Review Commission**, Chinese companies have not only closed the gap with Western leaders but have begun setting architectural standards that the entire industry is now adopting. The scale of this shift is best illustrated by the popularity ranking on the **OpenRouter** platform, which aggregates access to various models through a single API.


Currently, all six of the most popular models come from China. They are, in order:

1. MiMo-V2-Pro (Xiaomi)
2. Step 3.5 Flash (stepfun)
3. DeepSeek V3.2 (DeepSeek)
4. MiniMax M2.7 (MiniMax)
5. MiniMax M2.5 (MiniMax)
6. GLM 5 Turbo (z.ai)

Anthropic's flagship products, Claude Opus 4.6 and Claude Sonnet 4.6, have fallen to seventh and eighth place, respectively. The decline in market share is even more concerning: in March 2025, Anthropic accounted for 29.1% of queries, and by March 21, 2026 that figure had slid to just 13.3%. The company lost more than half of its position in twelve months.
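The size of that drop can be checked with a few lines of arithmetic. The sketch below uses only the two share figures quoted in this article; the function name `relative_decline` is an illustrative choice and not part of any published methodology.

```python
def relative_decline(before: float, after: float) -> float:
    """Return the fractional drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Share of queries as quoted in the article, in percent.
share_march_2025 = 29.1
share_march_2026 = 13.3

# Absolute loss in percentage points and relative loss as a fraction.
points_lost = share_march_2025 - share_march_2026
decline = relative_decline(share_march_2025, share_march_2026)

print(f"Lost {points_lost:.1f} percentage points, "
      f"a {decline:.1%} relative decline")
```

On these figures the share fell by 15.8 percentage points, which is roughly 54% of the starting value.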

Economics vs. Ethics: A price gap of more than tenfold

Anthropic's problem is not just performance but, above all, ruthless economics. Chinese competitors offer similar quality for a fraction of the price. An analysis conducted by Kilo Code showed that the MiniMax M2.7 model delivers 90% of the quality offered by Claude Opus 4.6 while costing only about 7% of its price. In absolute numbers, this means spending $0.27 instead of $3.67 on the same task.
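To see how the percentage and the dollar figures relate, the numbers quoted above can be worked through directly. This is a minimal sketch: the "quality per dollar" ratio at the end is an illustrative metric assumed here, not something taken from the Kilo Code analysis.

```python
# Per-task costs and relative quality as quoted in the article.
cost_minimax = 0.27   # USD, MiniMax M2.7
cost_opus = 3.67      # USD, Claude Opus 4.6
quality_ratio = 0.90  # MiniMax quality relative to Opus

# MiniMax's price as a fraction of Opus's price.
price_ratio = cost_minimax / cost_opus

# How many times more expensive Opus is for the same task.
price_multiple = cost_opus / cost_minimax

# Assumed illustrative metric: relative quality divided by relative price.
quality_per_dollar_gain = quality_ratio / price_ratio

print(f"MiniMax costs {price_ratio:.1%} of the Opus price")
print(f"Opus is {price_multiple:.1f}x more expensive")
print(f"Quality per dollar is {quality_per_dollar_gain:.1f}x higher")
```

The dollar figures work out to about 7.4% of the Opus price, so Opus costs roughly 13.6 times as much per task. Under the assumed metric, that is about 12 times more quality per dollar.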

Anthropic has responded by accusing companies such as MiniMax, Moonshot AI, and DeepSeek of "distilling" its models, that is, training their own models on output generated by Claude. This is a risky argument, given that Anthropic's own models were built on content scraped from the web without the explicit consent of creators. Without political intervention and market protection, the company may not be able to maintain the margins necessary to achieve positive cash flow before going public.

Safety obsession paralyzes specialists

The most controversial aspect of Anthropic's strategy remains its approach to safety, which is beginning to resemble censorship that paralyzes professional applications. The company, which gained recognition by resisting pressure from the US Department of Defense to loosen its safeguards, is now losing users due to the overzealousness of its filters. The cybersecurity research community is canceling subscriptions en masse, complaining about a "stupid number of false positives."

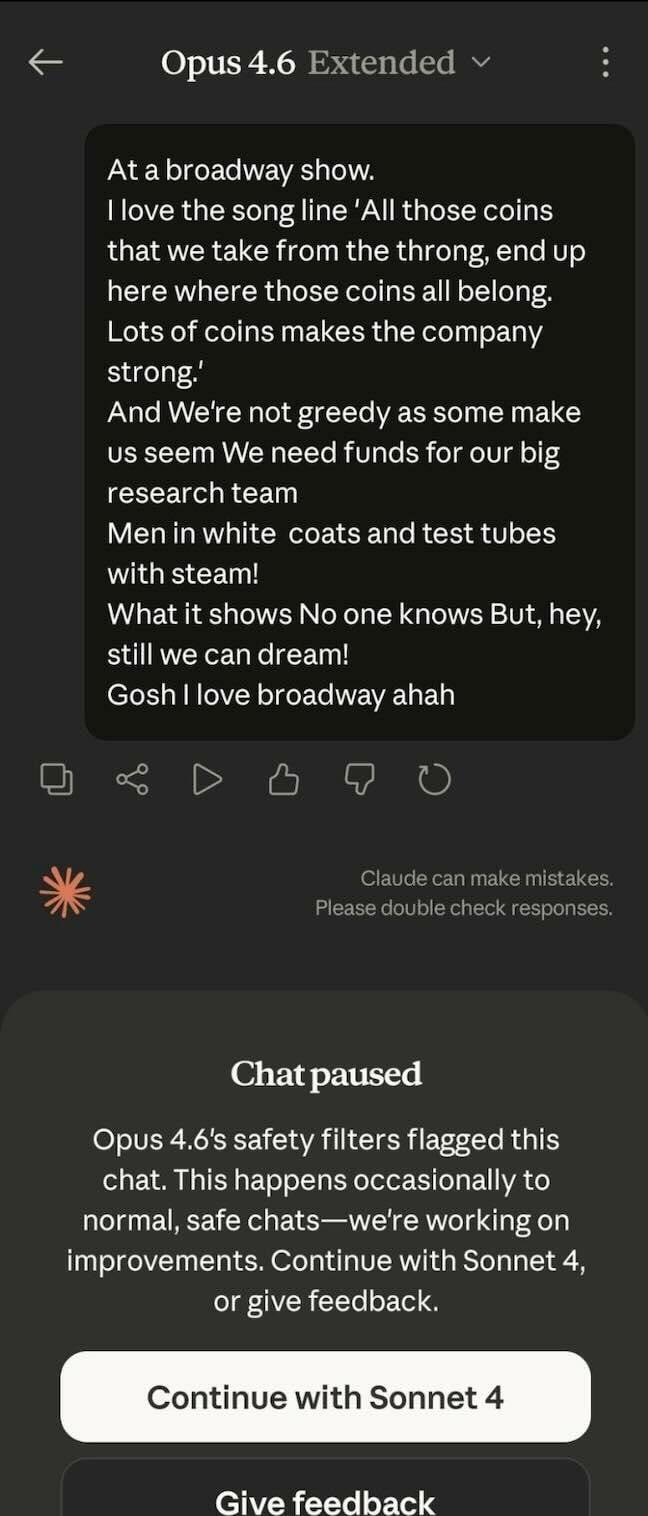

New safeguards introduced with the release of Opus 4.6 in February 2026 were intended to protect against CBRN (chemical, biological, radiological, and nuclear) misuse. In practice, however, these mechanisms are so sensitive that they can block a chat about the musical "Urinetown," deeming it a threat. For bug hunting and penetration testing experts, Claude has become a useless tool.

Anthropic admits that its guardrails may block legitimate defensive work and offers a special form through which professionals can request exemptions. However, the process is described as slow and lacking transparency. The result? Researchers who previously paid for Max subscriptions ($200 per month) are moving to Chinese alternatives. One interviewee explicitly points to MiniMax as a "distilled version of Claude" that is cheaper, just as good, and, crucially, does not avoid difficult topics in a research context.

If Anthropic does not find a middle ground between its mission of creating "harmless" AI and the brutal demands of the market and of specialists, its 2026 IPO could turn out to be a painful collision with reality. In an industry where user loyalty ends where a bill more than ten times higher or a blocked prompt begins, ethical purism may become the shortest path to market marginalization.
