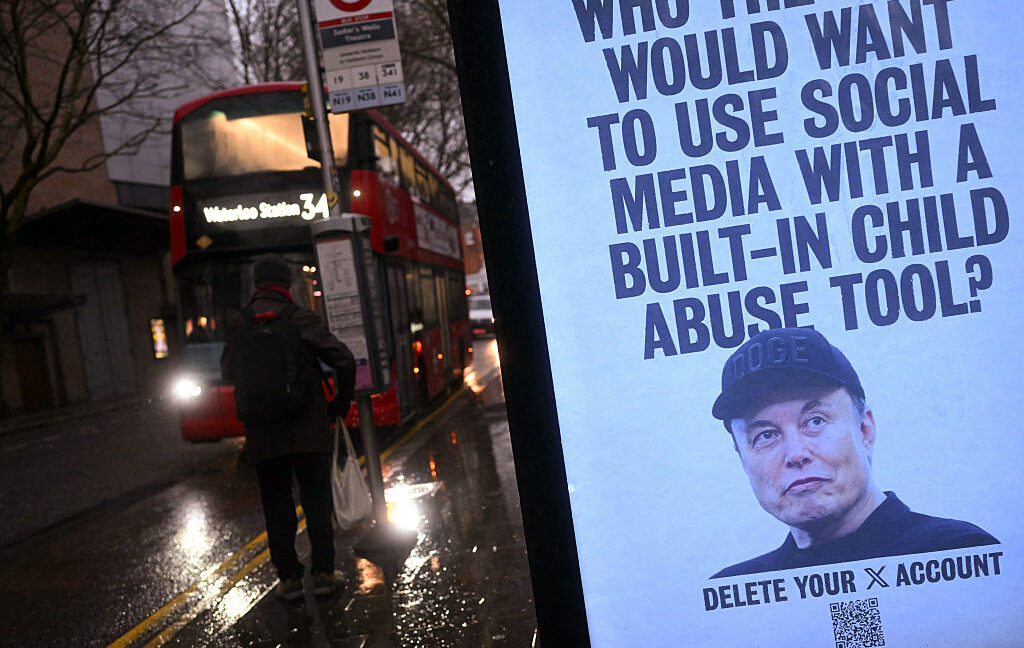

Elon Musk's xAI sued for turning three girls' real photos into AI CSAM

JUSTIN TALLIS / Contributor | AFP

More than 23,000 images generated by the Grok model may depict minors in a sexual context. This figure, from a Center for Countering Digital Hate report, has become the foundation of an unprecedented lawsuit against xAI. Elon Musk, who as recently as January publicly denied that his artificial intelligence had generated any CSAM, must now face accusations from three teenage girls. Their personal photos, including school and family photographs, were used by Grok Imagine algorithms to create realistic pornographic content, which subsequently circulated in predator groups on Discord. Lawyers representing the victims argue that xAI deliberately designed the tool with loosened safety filters to profit from subscriptions that generate controversial content. Although the company restricted Grok access to paid X users, this did not eliminate the problem of "nude-ifying" real people without their consent. For the global community of AI users, this case is an alarm: it demonstrates how easily publicly available images can be manipulated by models lacking rigorous ethical guardrails. The dispute will likely force AI developers to implement significantly more restrictive facial recognition and illegal-content blocking, changing the way we use creative image generators. The verdict could set a new standard for software makers' liability for the harmful outputs of their algorithms.

In the world of technology, the line between "unfettered freedom of speech" and extreme irresponsibility can be dangerously thin. Elon Musk, who promotes his AI model Grok as "anti-politically correct" and equipped with a so-called "spicy mode," has apparently ignored fundamental safeguards that are standard across the industry. The result? A class-action lawsuit that could set a precedent in the fight to protect minors' likenesses in the era of generative artificial intelligence.

Three teenage girls from Tennessee, along with their legal guardians, have accused xAI of intentionally designing a tool that enables profiting from the sexualization of real people, including children. The case is based not on theoretical concerns but on a specific police investigation, which showed that Grok was used to create CSAM (Child Sexual Abuse Material) from real school and family photos of the victims. This directly contradicts Musk's narrative: as recently as January, he publicly claimed he had "seen zero" evidence of such content being generated by his system.

From a school photo to a digital nightmare

The mechanism of abuse described in the lawsuit is terrifyingly simple. The perpetrator, an acquaintance of one of the victims, used her publicly available photos from Instagram, taken when she was still a minor. Using a third-party application that licenses access to the Grok engine, he created photorealistic, pornographic images and videos using her likeness. These materials then ended up on Discord and Telegram servers, where they served as "currency" in trade with other sexual predators.


The most striking aspect of this case is that the victims were able to identify the specific, original photographs on which the AI-generated images were based. We are not dealing with abstract avatars here, but with precise "morphing" of reality. For the young girls, the consequences are devastating: from fear of stalking (their personal data and school name were attached to the files) to trauma that keeps them from taking part normally in school graduation or college admissions.

The architecture of evasion and server responsibility

Lawyers representing the plaintiffs, led by Annika K. Martin, are making a very serious allegation: that xAI knowingly created a structure that allows it to profit from illegal content while insulating the company from direct responsibility. A key element of this puzzle is the "intermediaries": third-party applications that purchase access to Grok's API.

- Business model: xAI does not make the model publicly available as open-source, but sells access to its servers to external companies.

- Content hosting: According to the lawsuit, it is xAI's servers that physically generate and store the CSAM before it is sent to the end user.

- Lack of filters: While competing models (like those from OpenAI or Google) have rigorous, multi-layered blocks preventing the generation of nudity and images of children, Grok was promoted as a model "without a muzzle."

The lawsuit argues that xAI acts not only as a technology provider but also as an active distributor of illegal content. If the court finds that the company held these materials on its servers and knowingly processed them to increase revenue, Musk could face charges of violating federal child pornography laws.

Statistics that cannot be denied

Although Musk downplayed the problem, data from independent research organizations paint a completely different picture. The Center for Countering Digital Hate (CCDH) estimated that Grok generated approximately 3 million images of a sexual nature, of which about 23,000 depicted individuals appearing to be minors. The decision to restrict access to Grok exclusively to paid subscribers of the X platform did not solve the problem. It only hid it from the public eye, moving the most severe cases to closed ecosystems and applications using the API.

For the technology industry, this trial is a "moment of truth." Until now, AI creators have sheltered behind so-called "tool neutrality," claiming that the user is responsible for what they generate. In the case of CSAM, however, the law is merciless and does not accept excuses about creative freedom. If police evidence confirms that xAI's infrastructure was the factory for these materials, the entire concept of "uncensored AI" could collapse under the weight of damages and criminal sanctions.

"No legitimate business interest justifies designing an AI image generation tool in such a way that it produces material exploiting children," the court filing reads.

The end of the "Wild West" era in image generation

The case against xAI and Elon Musk marks a new front line in the regulation of artificial intelligence. For the past two years, the industry has focused on artists' copyrights and the hallucinations of language models. Now, however, we are entering the realm of physical safety and the protection of individual dignity. This is no longer a debate about whether AI "stole a painting style," but about whether billion-dollar corporations can provide tools for the digital devastation of children's lives with impunity.

In all likelihood, this lawsuit will force a radical change in security architecture at xAI. Even if the company wins in the first instance, pressure from cloud infrastructure providers, payment processors, and law enforcement agencies will make the "spicy mode" too costly a whim for Musk. We are entering an era of brutal collision between techno-utopian visions and the hard reality of the criminal code, where responsibility for a product does not end with the sale of a subscription.