OpenAI Patches ChatGPT Data Exfiltration Flaw and Codex GitHub Token Vulnerability

Foto: The Hacker News

Nearly 18,000 GitHub tokens belonging to developers using the OpenAI Codex model could have been compromised by hackers due to a recently patched security vulnerability. A data exfiltration flaw in ChatGPT allowed sensitive user data to be transmitted to external servers controlled by attackers, utilizing unauthorized prompt injection. OpenAI responded to reports from security researchers by implementing fixes that block the possibility of information leakage through malicious scripts hidden in AI-generated results. For the global community of creators and companies using generative AI tools, this is a clear signal that integrating language models into the programming ecosystem carries the risk of cascading privacy breaches. The practical implications are obvious: users must exercise extreme caution when pasting sensitive code snippets into chats and must regularly rotate API keys. This incident forces organizations to move toward Zero Trust Network Access (ZTNA) architecture, which, instead of relying on traditional VPNs, isolates application access and minimizes the risk of lateral movement within the network. Security in the AI era is ceasing to be a matter of firewall configuration and is becoming a process of continuous monitoring of permissions for every generated token.

The security of artificial intelligence systems has ceased to be a domain of theoretical academic considerations and has become a real battlefield for user data. The latest findings by researchers from Check Point shed new light on the vulnerability of language models to data exfiltration attacks. As it turns out, a single precisely formulated, malicious prompt was enough to transform a standard session with ChatGPT into a hidden information transfer channel, exporting sensitive data outside the platform's secure environment.

The vulnerability, which OpenAI has already patched, allowed for the exfiltration of conversation history, uploaded files, and other confidential content without any knowledge or consent from the user. This critical breach demonstrates that the architecture of chatbots based on dynamic instruction processing carries unique risks that traditional firewalls are unable to fully mitigate. The scale of the threat was significant as it concerned not only real-time generated text but also resources that users entrusted to the system for document analysis.

The Mechanism of Silent Data Leakage

The threat identified by cybersecurity experts relied on manipulating the way the model interprets external commands. In an attack scenario, an attacker could inject instructions directing ChatGPT to encode fragments of the conversation and send them to an external server under the hacker's control. The entire process took place in the background, often masked as innocent image rendering operations or links, meaning the average user had no chance of noticing the anomaly in the browser interface.

Read also

Key aspects of the detected vulnerability included:

- Prompt Injection: A technique allowing the overriding of system security instructions using malicious user input or an external data source.

- Covert Channels: Creating hidden communication channels that utilize standard model functions (e.g., markdown) to transmit data to external domains.

- File Exfiltration: The ability to read and send the contents of PDF, DOCX, and other attachments that the user uploaded for analysis within a given thread.

This problem goes beyond simple code errors—it is a fundamental challenge related to how LLM (Large Language Models) treat input data. For the model, there is no clear boundary between "data to be processed" and "instructions to be executed," which opens the door for creative abuse of the system's logic.

The Issue of GitHub Tokens in the Codex Model

Parallel to the fixes in ChatGPT, OpenAI had to address another serious issue concerning the Codex model. A vulnerability related to GitHub tokens was detected, which could lead to unauthorized access to private code repositories. Given that Codex is the foundation for tools like GitHub Copilot, the risk of compromising the intellectual property of thousands of companies was real and immediate.

This vulnerability potentially allowed for the leakage of authentication keys that were stored or processed with insufficient security during code generation sessions. In the world of professional programming, where automation and AI assistants are becoming standard, the security of access tokens is a critical element of the software supply chain. OpenAI responded by implementing stronger credential isolation mechanisms and improving output filters designed to prevent the accidental disclosure of sensitive strings.

A New Era of Security: From VPN to ZTNA

These incidents are forcing organizations to redefine their data protection strategies in the era of widespread AI adoption. Traditional solutions, such as VPN, are proving insufficient against application-layer threats and model logic manipulation. The industry is increasingly leaning towards Zero Trust Network Access (ZTNA) architecture, which eliminates lateral movement and connects users directly to applications rather than the entire corporate network.

Implementing ZTNA in the context of using tools like ChatGPT allows for:

- Precise control over who can send data to external AI models and to what extent.

- Monitoring sessions for unusual behavior, such as attempts to establish connections with unknown domains during interaction with the bot.

- Ensuring that access to AI-based creative and programming tools occurs within strictly defined security policies.

The transition to comprehensive ZTNA is not just a matter of replacing technology, but a paradigm shift—from trusting the "inside" of the network to permanent verification of every query and every attempt to access resources.

The Evolution of Threats Requires a Proactive Stance

The described vulnerabilities in ChatGPT and Codex are proof that the pace of AI functionality development often outstrips the pace of security implementation. The fact that OpenAI responded quickly to Check Point's reports is an optimistic signal, yet it does not release corporate users from vigilance. Any tool that "understands" and interprets natural language is by definition vulnerable to semantic manipulation attempts.

In the face of the growing complexity of attacks, the only effective strategy becomes the "assume breach" mentality. This means the necessity of using advanced content moderation filters, DLP (Data Loss Prevention) systems integrated with AI interfaces, and continuous employee education regarding the risks associated with shadow AI. Security in the era of generative artificial intelligence is not a final state, but a process of continuous adaptation to increasingly sophisticated data exfiltration methods.

It can be assumed with high probability that we will witness more discoveries of this type in the near future. The line between a secure tool and an attack vector is becoming thinner, which will force AI technology providers to integrate defense mechanisms even more deeply directly into the model architecture, rather than relying solely on external protective layers.

More from Security

$285 Million Drift Hack Traced to Six-Month DPRK Social Engineering Operation

36 Malicious npm Packages Exploited Redis, PostgreSQL to Deploy Persistent Implants

Fortinet Patches Actively Exploited CVE-2026-35616 in FortiClient EMS

China-Linked TA416 Targets European Governments with PlugX and OAuth-Based Phishing

Related Articles

How LiteLLM Turned Developer Machines Into Credential Vaults for Attackers

Apr 6

Qilin and Warlock Ransomware Use Vulnerable Drivers to Disable 300+ EDR Tools

Apr 6

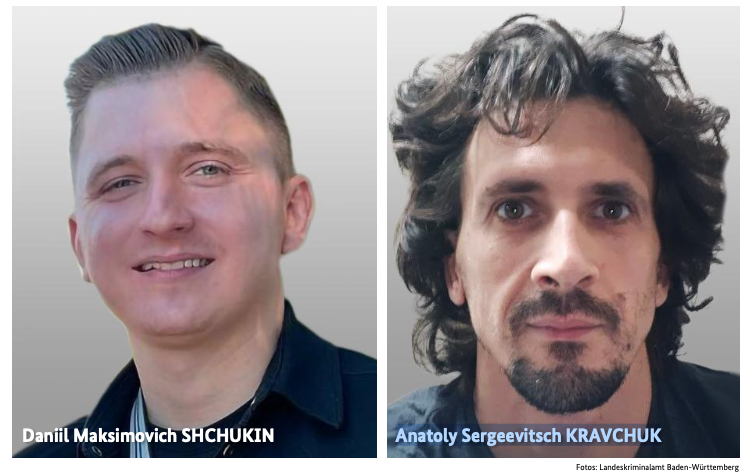

BKA Identifies REvil Leaders Behind 130 German Ransomware Attacks

Apr 6