Vertex AI Vulnerability Exposes Google Cloud Data and Private Artifacts

Photo: The Hacker News

Cybersecurity researchers have disclosed a security "blind spot" in Google Cloud's Vertex AI platform that could allow artificial intelligence (AI) agents to be weaponized by an attacker to gain unauthorized access to sensitive data and compromise an organization's cloud environment. According to Palo Alto Networks Unit 42, the issue relates to how the Vertex AI permission model can be misused

As artificial intelligence becomes the operational foundation of modern enterprises, the security of the platforms that process this data is turning into a top priority. Researchers from Palo Alto Networks Unit 42 have revealed a critical security flaw in Google Cloud's Vertex AI platform. The vulnerability, which they describe as a "security blind spot," sheds new light on the risks of deploying AI agents in cloud environments.

The problem does not stem from a bug in the code itself, but from the fundamental way the Vertex AI permission model was designed. Exploiting this flaw allows a potential attacker to weaponize AI agents so that they gain unauthorized access to an organization's sensitive data. In the worst case, this could lead to the theft of private artifacts and the compromise of a company's entire cloud environment, a catastrophic outcome in an era of rigorous data protection regulations.

The mechanism of Vertex AI permission model abuse

The key to understanding the threat is the specific way AI agents operate, using broad permissions to efficiently process queries and interact with various Google Cloud services. According to the Unit 42 report, an attacker can manipulate an agent's decision-making processes, forcing it to go beyond its defined scope of duties. By exploiting gaps in permission configurations, an external entity is able to trick the system into exfiltrating data that theoretically should not be accessible to anyone outside a narrow group of administrators.

The most disturbing aspect of this discovery is the fact that traditional monitoring tools may not detect this type of activity. Because operations are performed by authorized Vertex AI instances, security systems often classify them as legitimate traffic within the infrastructure. It is this invisibility that leads researchers to speak of a "blind spot" – a place where standard security policies stop working, and control is taken over by the logic of the AI model, which can be relatively easily bent for criminal purposes.

In practice, this means that private artifacts, such as machine learning models, training datasets, or API keys stored within Google Cloud, become vulnerable to theft. For technology companies whose value is based on the intellectual property contained in algorithms, such data exposure poses an existential threat. Palo Alto Networks emphasizes that without a deep revision of how AI agents interact with cloud resources, similar incidents will recur.

Evolution of access: from VPN to Zero Trust Network Access

The Vertex AI vulnerability fits into a broader trend: the need to modernize how access to digital resources is controlled. Traditional solutions such as VPNs are becoming insufficient against advanced internal threats and lateral movement. Instead of verifying identity only at the "entrance" to the network, modern organizations must implement a comprehensive Zero Trust Network Access (ZTNA) approach, which eliminates default trust even for processes running inside the cloud.

- Elimination of lateral movement: ZTNA connects users and AI processes directly to applications, rather than the entire network, which limits an attacker's room for maneuver after compromising a single element.

- Granular permission control: Every interaction of an AI agent with data in Google Cloud should be verified against the Principle of Least Privilege.

- Continuous contextual monitoring: Security systems must analyze not only "who" has access, but "how" and "for what purpose" data is being processed by Vertex AI models.

Moving from a VPN-based model to full ZTNA creates a more secure environment for artificial intelligence development. In the context of Vertex AI, this means isolating agents from critical resources that they do not need in order to function. Without such separation, even the most advanced Google Cloud defense mechanisms can be bypassed through the creative use of system permissions.
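The Principle of Least Privilege mentioned above can be illustrated with a small comparison of what an agent needs against what it has been granted. This is a minimal sketch, not something taken from the Unit 42 report: the `AgentProfile` type, the `support-agent` name, and the permission sets are all hypothetical, and the permission strings are used purely as illustrative labels.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical record of what an agent needs versus what it was granted."""
    name: str
    required: frozenset
    granted: frozenset


def excess_permissions(profile: AgentProfile) -> set:
    """Return permissions that were granted but are not required."""
    return set(profile.granted - profile.required)


# Illustrative data only; the agent name and permission sets are assumptions.
support_agent = AgentProfile(
    name="support-agent",
    required=frozenset({"aiplatform.endpoints.predict", "storage.objects.get"}),
    granted=frozenset({
        "aiplatform.endpoints.predict",
        "storage.objects.get",
        "storage.objects.list",
        "secretmanager.versions.access",
    }),
)

if __name__ == "__main__":
    # Every permission listed here is a candidate for revocation.
    for permission in sorted(excess_permissions(support_agent)):
        print(f"{support_agent.name}: consider revoking {permission}")
```

In a real review, the `required` set would have to come from an honest inventory of what the agent actually does, which is usually the hard part.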

Security architecture in the era of autonomous agents

The analysis by Palo Alto Networks Unit 42 suggests that the problem with Vertex AI is only the tip of the iceberg. As AI agents become more autonomous, their ability to perform actions on behalf of the user grows, and with it, the risk of abuse. The cloud security model must evolve toward dynamic machine identity management. Currently, many organizations make the mistake of treating AI agents as static tools, while in reality, they are dynamic operational entities with broad capabilities to modify their behavior.

"AI security is not just about protecting the model from adversarial attacks; it is primarily about securing the infrastructure on which these models operate," industry experts point out, commenting on the Vertex AI case.

It is necessary to introduce an intermediary layer that will audit queries generated by Vertex AI agents before they are executed in the production environment. These types of "guardrails" can block attempts to access sensitive artifacts, even if system permissions theoretically allow it. This approach is essential to close the "blind spots" identified by security researchers.
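One way to picture such an intermediary layer is a check that runs before any agent-generated request is executed. The sketch below is an assumption-laden illustration rather than a description of any Vertex AI feature: `AgentRequest`, `review`, the resource paths, and the pattern lists are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class AgentRequest:
    """Hypothetical description of an action an agent wants to perform."""
    agent: str
    action: str    # for example "read" or "write"
    resource: str  # hypothetical resource path


# Hypothetical deny list: always blocked, even if IAM would allow the call.
SENSITIVE_PATTERNS = ("secrets/*", "models/private/*", "datasets/training/*")

# Hypothetical allow list: (action, resource pattern) pairs per agent.
ALLOWED = {
    "support-agent": {("read", "docs/*")},
}


def review(request: AgentRequest) -> tuple:
    """Return (allowed, reason). Deny rules win; anything unlisted is blocked."""
    for pattern in SENSITIVE_PATTERNS:
        if fnmatchcase(request.resource, pattern):
            return False, f"blocked: {request.resource} matches {pattern}"
    for action, pattern in ALLOWED.get(request.agent, ()):
        if action == request.action and fnmatchcase(request.resource, pattern):
            return True, "allowed"
    return False, "blocked: no allow rule matches"


if __name__ == "__main__":
    for req in (
        AgentRequest("support-agent", "read", "docs/faq.md"),
        AgentRequest("support-agent", "read", "secrets/api-key"),
    ):
        print(req.resource, review(req))
```

The design choice worth noting is default deny: a request passes only when an explicit allow rule matches and no sensitive pattern does, so a manipulated agent cannot reach an artifact simply because its underlying permissions are broad.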

A new standard for AI resource protection

The Vertex AI incident should be a wake-up call for all Chief Information Security Officers (CISOs). Secure implementation of artificial intelligence requires more than just choosing a reputable cloud provider. It requires active auditing of IAM (Identity and Access Management) configurations and an understanding that every new AI feature introduces a unique attack vector. Google Cloud regularly updates its systems; however, the responsibility for proper configuration and privilege limitation rests largely with the end user.
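As a rough illustration of what an active IAM audit could look for, the sketch below scans a policy document for service accounts that hold broad roles. It assumes the policy has already been exported as a dictionary with a `bindings` list of `role` and `members` entries; the sample policy, the account names, and the heuristic of flagging `roles/owner`, `roles/editor`, and any role ending in `.admin` are assumptions made for this example, not guidance from Google or Unit 42.

```python
# Basic roles that grant very wide access; the ".admin" suffix check below is
# an additional heuristic chosen for this sketch.
BROAD_ROLES = {"roles/owner", "roles/editor"}


def find_broad_service_account_bindings(policy: dict) -> list:
    """Return sorted (member, role) pairs where a service account holds a broad role."""
    findings = []
    for binding in policy.get("bindings", []):
        role = binding.get("role", "")
        if role in BROAD_ROLES or role.endswith(".admin"):
            for member in binding.get("members", []):
                if member.startswith("serviceAccount:"):
                    findings.append((member, role))
    return sorted(findings)


# Hypothetical policy used only to exercise the function.
AGENT_SA = "serviceAccount:agent-runner@example-project.iam.gserviceaccount.com"
PIPELINE_SA = "serviceAccount:pipeline@example-project.iam.gserviceaccount.com"

sample_policy = {
    "bindings": [
        {"role": "roles/editor", "members": [AGENT_SA, "user:alice@example.com"]},
        {"role": "roles/aiplatform.user", "members": [AGENT_SA]},
        {"role": "roles/storage.admin", "members": [PIPELINE_SA]},
    ]
}

if __name__ == "__main__":
    for member, role in find_broad_service_account_bindings(sample_policy):
        print(f"review: {member} holds {role}")
```

A check like this only surfaces candidates for review; whether a given binding is actually excessive depends on what the workload behind the service account needs.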

In my view, we are at the dawn of a new era of cybersecurity, where the battle will be fought over the integrity of AI agent logic. If we do not learn to effectively isolate artificial intelligence decision-making processes from the core of our data, we risk that the most powerful productivity tool we have ever created will become our greatest security challenge. The future of data protection in the cloud will not belong to those who build the highest walls, but to those who can most precisely manage trust within their systems.

More from Security

$285 Million Drift Hack Traced to Six-Month DPRK Social Engineering Operation

36 Malicious npm Packages Exploited Redis, PostgreSQL to Deploy Persistent Implants

Fortinet Patches Actively Exploited CVE-2026-35616 in FortiClient EMS

China-Linked TA416 Targets European Governments with PlugX and OAuth-Based Phishing

Related Articles

How LiteLLM Turned Developer Machines Into Credential Vaults for Attackers

Apr 6

Qilin and Warlock Ransomware Use Vulnerable Drivers to Disable 300+ EDR Tools

Apr 6

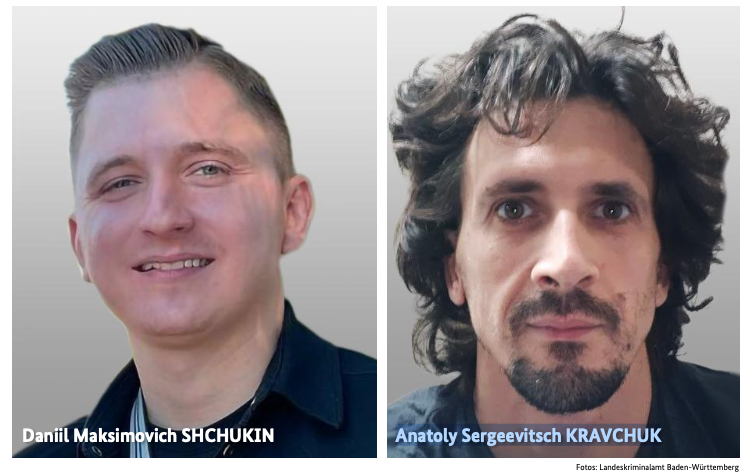

BKA Identifies REvil Leaders Behind 130 German Ransomware Attacks

Apr 6