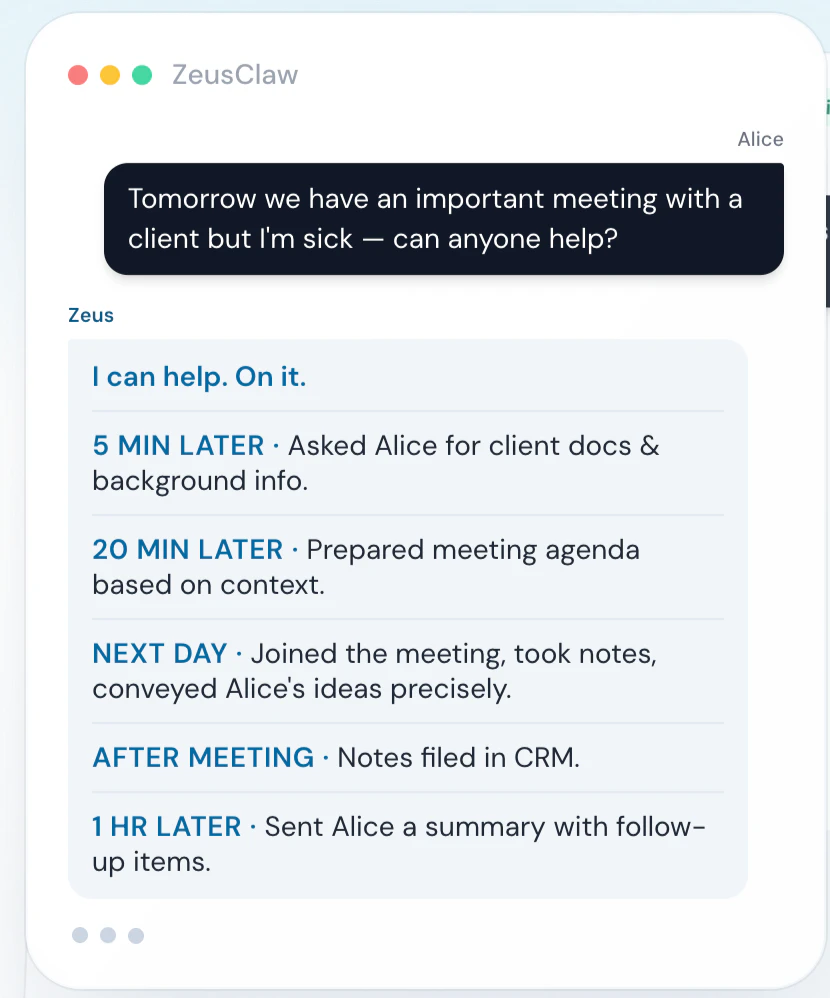

Zeus

Photo: Product Hunt AI

An energy reduction of more than 40% alongside a 25% increase in model training speed puts the Zeus framework at the forefront of the green AI movement. Developed by researchers, it addresses one of modern technology's greatest challenges: the large carbon footprint of NVIDIA GPUs used for deep learning training. The system works dynamically, automatically selecting the GPU power limit and the batch size to balance runtime against electricity consumption, a tuning process that until now has been tedious and manual. For creators and engineers working with AI, Zeus means a tangible reduction in operating costs without a drastic sacrifice in performance.

With energy prices rising and sustainability regulations tightening, this kind of tool is becoming an essential link in the production pipeline. In practice, the framework lets Machine Learning projects scale more ethically and economically by cutting the resource waste that has plagued the industry for years. Rather than blindly pursuing maximum computing power, it brings intelligence to infrastructure management and makes the cost of model development more predictable. Solutions like this lay the foundation for the continued growth of generative artificial intelligence.
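The trade-off between runtime and electricity use can be made concrete with a small sketch. It assumes a blended cost that weights energy against time through a knob `eta`, with time scaled by the GPU's maximum power so both terms are in joules. The names `Measurement`, `cost`, and `pick_config` are hypothetical, the measurements are invented for illustration, and none of this is presented as the framework's actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Measurement:
    batch_size: int
    power_limit_w: int  # GPU power cap in watts
    time_s: float       # time to reach the target metric, in seconds
    energy_j: float     # energy consumed over that time, in joules


def cost(m: Measurement, eta: float, max_power_w: int) -> float:
    """Blend energy and time into a single number in joules.

    eta = 1.0 optimizes energy only; eta = 0.0 optimizes time only.
    Time is multiplied by max_power_w so both terms share a unit.
    """
    return eta * m.energy_j + (1.0 - eta) * max_power_w * m.time_s


def pick_config(measurements, eta: float, max_power_w: int) -> Measurement:
    """Return the measured configuration with the lowest blended cost."""
    return min(measurements, key=lambda m: cost(m, eta, max_power_w))


# Hypothetical measurements, for illustration only.
MEASUREMENTS = [
    Measurement(batch_size=64, power_limit_w=300, time_s=1000.0, energy_j=280_000.0),
    Measurement(batch_size=64, power_limit_w=200, time_s=1100.0, energy_j=215_000.0),
    Measurement(batch_size=128, power_limit_w=300, time_s=900.0, energy_j=265_000.0),
    Measurement(batch_size=128, power_limit_w=200, time_s=980.0, energy_j=195_000.0),
    Measurement(batch_size=128, power_limit_w=150, time_s=1400.0, energy_j=185_000.0),
]

if __name__ == "__main__":
    for eta in (0.0, 0.5, 1.0):
        best = pick_config(MEASUREMENTS, eta, max_power_w=300)
        print(eta, best.batch_size, best.power_limit_w)
```

With these made-up numbers, a pure speed preference picks the uncapped 300 W run, a pure energy preference picks the 150 W cap, and a balanced `eta` of 0.5 lands on the 200 W cap. A real tuner would collect such measurements during training rather than from a fixed table.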

In a world dominated by giants like OpenAI and Google, it is rare to see a product that does not try to be another "assistant for everything" and instead targets a specific pain point of modern data engineering. Zeus enters the scene as a tool that aims to organize informational chaos before it can paralyze decision-making in AI-driven companies. It is not another language model; it is a platform designed for performance and precision in managing the flow of knowledge.

The key to understanding the Zeus phenomenon is recognizing the gap between raw data and its utility in RAG (Retrieval-Augmented Generation) systems. While most developers focus on the parameters of the models themselves, the creators of Zeus focused on the foundation: structure and accessibility. In an era where AI hallucinations are the biggest implementation barrier, Zeus offers an architecture that minimizes the risk of errors through rigorous context control and intelligent resource indexing.

Architecture based on lightning-fast processing

At the heart of the Zeus system is a proprietary query optimization engine that sharply reduces response time even when searching very large datasets. Unlike traditional vector databases, which often become a bottleneck under high load, Zeus takes a hybrid approach to information processing. It combines classic semantic search with an advanced categorization layer, allowing AI models to "understand" the document structure before text generation begins.
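The hybrid idea described above, a categorization layer combined with semantic search, can be sketched in a few lines. This is a minimal illustration and not the platform's engine: the `Doc` type, the `hybrid_search` function, the category labels, and the three-dimensional embeddings are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    category: str     # label assigned by a (hypothetical) categorization layer
    embedding: tuple  # toy embedding vector


def cosine(a, b) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_search(query_vec, query_category, docs, top_k=2):
    """Filter by category first, then rank the survivors by similarity."""
    candidates = [d for d in docs if d.category == query_category]
    ranked = sorted(
        candidates,
        key=lambda d: cosine(query_vec, d.embedding),
        reverse=True,
    )
    return [d.doc_id for d in ranked[:top_k]]


# Toy corpus, for illustration only.
DOCS = [
    Doc("refund-policy", "billing", (0.9, 0.1, 0.0)),
    Doc("invoice-faq", "billing", (0.6, 0.4, 0.0)),
    Doc("api-limits", "engineering", (0.95, 0.05, 0.0)),
]

if __name__ == "__main__":
    print(hybrid_search((1.0, 0.0, 0.0), "billing", DOCS))
```

Filtering before ranking shrinks the set of vectors that must be compared, which is one plausible reason a categorization layer helps under load. In this toy corpus, `api-limits` is the closest vector to the query but is excluded because it sits in a different category.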

For AI engineers, this means an end to the tedious data cleaning process before every deployment. Zeus automates the ingestion process, recognizing key connections between dispersed files. This system doesn't just index text; it creates a dynamic dependency map that evolves as new information is added to the repository. This approach makes integration with popular frameworks like LangChain or LlamaIndex a formality rather than a programming challenge.

Precision over model size

There is a misconception in the tech industry that a larger model always means better results. Zeus challenges this thesis, proving that a properly prepared context window is worth more than billions of additional parameters in a neural network. By applying Context Distillation techniques, this tool can extract the most important fragments from thousands of pages of documentation, delivering only what is essential to the LLM to provide a correct answer.

- Intelligent Chunking: Zeus does not cut text in random places; it analyzes the logical structure of paragraphs and sections.

- Dynamic Ranking: Search results are sorted not only by cosine similarity but also by the freshness and credibility of the source.

- Low Latency: An architecture optimized for cloud infrastructure allows for real-time results, which is crucial for customer service systems.
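The first two points in the list can be illustrated with a small sketch. It assumes that paragraphs are separated by blank lines, that freshness decays with a half-life, and that the final score is a weighted sum. The weights, the half-life, and the names `chunk_by_paragraph`, `Hit`, `rank_score`, and `rank` are hypothetical choices, not the platform's documented behavior.

```python
from dataclasses import dataclass


def chunk_by_paragraph(text: str, max_chars: int = 200) -> list:
    """Pack whole paragraphs into chunks; never cut inside a paragraph.

    A single paragraph longer than max_chars is kept as its own chunk.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks, current = [], ""
    for para in paragraphs:
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_chars:
            chunks.append(current)  # close the chunk at a paragraph boundary
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


@dataclass(frozen=True)
class Hit:
    source: str
    similarity: float   # cosine similarity to the query, 0..1
    age_days: float     # time since the source was last updated
    credibility: float  # editorial trust score, 0..1 (assumed input)


def rank_score(hit: Hit, half_life_days: float = 180.0,
               weights=(0.6, 0.2, 0.2)) -> float:
    """Weighted sum of similarity, freshness, and credibility."""
    freshness = 0.5 ** (hit.age_days / half_life_days)
    w_sim, w_fresh, w_cred = weights
    return w_sim * hit.similarity + w_fresh * freshness + w_cred * hit.credibility


def rank(hits):
    """Sort hits from best to worst blended score."""
    return sorted(hits, key=rank_score, reverse=True)


# Toy hits, for illustration only.
HITS = [
    Hit("old-blog", similarity=0.90, age_days=720, credibility=0.3),
    Hit("official-doc", similarity=0.85, age_days=0, credibility=1.0),
]

if __name__ == "__main__":
    print(chunk_by_paragraph("aaaa\n\nbbbb\n\ncccc", max_chars=10))
    print([h.source for h in rank(HITS)])
```

In this example the fresh, trusted source outranks the slightly more similar but two-year-old one, which is the behavior the "Dynamic Ranking" point describes.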

These characteristics make Zeus a strong fit for sectors with strict legal and technical requirements, such as finance or medicine. Where a misplaced zero or a misread procedure can cost a fortune, the precision offered by this platform becomes a standard, not a luxury. The system is also fully auditable: the user always knows exactly which source fragment a piece of AI-generated information comes from.

Integration and scalability in the AI ecosystem

One of the strongest points of Zeus is its versatility. From the beginning, the creators focused on open standards, allowing for the painless integration of the platform into existing tech stacks. Regardless of whether a company uses GPT-4, Claude 3.5 Sonnet, or local solutions like Llama 3, Zeus acts as an independent data intelligence layer that feeds these models with verified knowledge.

The economics of a Zeus implementation are also worth noting. By sharply reducing the number of tokens sent with each query through better context filtering, the platform lowers the operating cost of paid APIs. Instead of sending entire documents to the model in the hope that the answer is somewhere inside, developers use Zeus to extract only the relevant fragment, which translates into savings of 30-50% per month.
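The arithmetic behind such savings is simple to sketch. The query volume, token counts, and price below are hypothetical, and the calculation covers input tokens only, ignoring output tokens and fixed overheads.

```python
def monthly_cost(queries_per_month: int, tokens_per_query: int,
                 usd_per_million_tokens: float) -> float:
    """Input-token spend per month in USD."""
    return queries_per_month * tokens_per_query * usd_per_million_tokens / 1_000_000


def savings_fraction(full_tokens: int, filtered_tokens: int) -> float:
    """Share of input-token spend saved by sending a smaller context."""
    return 1.0 - filtered_tokens / full_tokens


if __name__ == "__main__":
    # Hypothetical workload: 100,000 queries a month at 3 USD per million tokens.
    before = monthly_cost(100_000, 8_000, 3.0)  # whole documents in the prompt
    after = monthly_cost(100_000, 4_800, 3.0)   # filtered context only
    print(before, after, savings_fraction(8_000, 4_800))
```

Under these assumptions the bill drops from 2,400 USD to 1,440 USD, a 40% saving. The saving on input tokens is exactly the fraction by which the context shrinks, so a 30-50% reduction requires trimming the prompt by that much.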

"The true power of artificial intelligence lies not in its ability to speak, but in its ability to know where to look for the truth. Zeus is the missing link in this process."

Looking at the direction the industry is heading, platforms like Zeus will become the foundation of a new generation of software. The era of experimenting with chatbots is ending; the era of professional agentic systems that must operate on facts is beginning. Zeus provides the infrastructure that makes these facts accessible, secure, and ready for use in a fraction of a second. This is not just an evolution of developer tools — it is a redefinition of how AI systems interact with human knowledge.

It is safe to assume that in the coming months, we will see a surge of solutions built directly on the Zeus engine. The competitive advantage provided by instant access to precise data is too great to be ignored by leaders in creative and analytical technologies. This platform sets a new standard in the field of "Data Intelligence," prioritizing quality, which ultimately always wins over quantity.
