AI Flaws in Amazon Bedrock, LangSmith, and SGLang Enable Data Exfiltration and RCE

Photo: The Hacker News

Security researchers have discovered critical vulnerabilities in three popular AI platforms (Amazon Bedrock, LangSmith, and SGLang) that enable data theft and remote code execution. The vulnerabilities concern how these systems handle prompts and interactions with language models, allowing attackers to bypass security measures and gain access to sensitive information. The problem is particularly serious for enterprises that use these tools to process customer data and run internal operations. The vulnerabilities also open the door to prompt injection, in which malicious instructions force the model to disclose data or perform unauthorized operations. Experts recommend implementing Zero Trust Network Access (ZTNA), a model in which every access to applications and resources requires verification regardless of the user's location. Such an approach restricts lateral movement within the network and limits potential damage in case of compromise. Enterprises should update their systems promptly and audit the security of their AI platforms.

AI system security has become one of the most challenging issues in the modern technology industry. While giants like Amazon, Anthropic, and OpenAI invest billions in developing advanced language models, their infrastructure — especially code sandboxes and execution environments — proves vulnerable to attacks that can lead to complete system takeover. A discovery made by researchers from BeyondTrust reveals an alarming vulnerability: attackers can use ordinary DNS queries to exfiltrate sensitive data and obtain interactive system shells on servers hosting popular AI platforms.

This is not an ordinary vulnerability discovery. It concerns a fundamental design flaw affecting three key components of the AI ecosystem: Amazon Bedrock, LangSmith, and SGLang. Each of them is widely used by enterprises, startups, and researchers worldwide. The vulnerability allows for something worse than data leakage — it enables complete remote control over execution environments where API keys, model training data, or sensitive customer information may be stored.

The Polish technology industry, increasingly interested in integrating AI tools into its products, should pay special attention to this vulnerability. Many Polish startups and IT companies use Amazon Web Services and tools related to the LangChain ecosystem (to which LangSmith belongs). This means that this vulnerability can directly impact the security of systems being developed in Poland.

How DNS Became a Tool for Attacking AI Sandboxes

Traditionally, code sandboxes are designed to isolate code executed by users from the rest of the system. However, Amazon Bedrock AgentCore Code Interpreter in sandbox mode allows outgoing DNS queries — something that at first glance seems harmless. After all, DNS is just a system for translating domain names into IP addresses. Could this really pose a threat?

The answer is yes. Researchers from BeyondTrust demonstrated that DNS can be used as a communication channel for data exfiltration. Instead of sending data over direct network connections (which are usually monitored and filtered), attackers can encode sensitive information in the names being looked up. Resolvers forward those lookups to the authoritative DNS server for the attacker's domain, which logs them. The attacker thereby obtains the data without the sandbox ever opening a direct connection to an attacker-controlled host.

The mechanism is simple. Suppose an attacker wants to steal an environment variable containing an API key. Code executed in the sandbox can send a DNS query for a name such as exfiltrated-api-key-xyz123.attacker.com. The attacker's DNS server receives this query and logs the full name, including the embedded key. The sandbox does not block the query because outgoing DNS is allowed; name resolution is needed for ordinary network operation.
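To make the channel concrete, the following minimal sketch shows how arbitrary bytes can be packed into valid DNS names and recovered from a query log. It performs no network traffic. The function names, the sequence-number label, and the `attacker.example` zone are illustrative assumptions, not details taken from the BeyondTrust research.

```python
import base64

MAX_LABEL = 63   # DNS limit: bytes per label
MAX_NAME = 253   # DNS limit: characters in a full name


def encode_as_query_names(secret: bytes, zone: str, labels_per_name: int = 2) -> list[str]:
    """Pack `secret` into base32 labels, adding a sequence label and the zone."""
    # Base32 output uses only letters and the digits 2-7, all valid in hostnames.
    encoded = base64.b32encode(secret).decode("ascii").rstrip("=").lower()
    labels = [encoded[i:i + MAX_LABEL] for i in range(0, len(encoded), MAX_LABEL)]
    names = []
    for seq, start in enumerate(range(0, len(labels), labels_per_name)):
        parts = labels[start:start + labels_per_name] + [str(seq), zone]
        name = ".".join(parts)
        if len(name) > MAX_NAME:
            raise ValueError("query name exceeds the DNS length limit")
        names.append(name)
    return names


def decode_query_names(names: list[str], zone: str) -> bytes:
    """Rebuild the original bytes from logged query names (any order)."""
    suffix = "." + zone
    chunks = {}
    for name in names:
        if not name.endswith(suffix):
            continue  # unrelated lookup
        *data_labels, seq = name[: -len(suffix)].split(".")
        chunks[int(seq)] = "".join(data_labels)
    encoded = "".join(chunks[i] for i in sorted(chunks)).upper()
    encoded += "=" * (-len(encoded) % 8)  # restore stripped base32 padding
    return base64.b32decode(encoded)


names = encode_as_query_names(b"API_KEY=xyz123", "attacker.example")
recovered = decode_query_names(names, "attacker.example")
```

The point for defenders is that each query looks like an ordinary lookup of a long, random-looking subdomain, so the data only becomes visible when DNS logs are inspected at the level of full query names.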

What makes this vulnerability particularly dangerous is that many security systems do not inspect DNS queries in enough detail. Administrators typically focus on blocking outgoing connections to suspicious ports or IP addresses while letting DNS traffic through as harmless. BeyondTrust researchers demonstrated that the assumption that DNS is safe can be a costly one.
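Inspecting DNS at a more detailed level can start with simple heuristics on the query name itself. The sketch below flags names whose subdomain part is unusually long or has high character entropy, both common signs of encoded data. The thresholds and the assumption that the registered domain is the last two labels are illustrative; a production filter would need tuning and a proper public-suffix lookup.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Entropy in bits per character; 0.0 for an empty string."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_exfiltration(
    query_name: str,
    max_label_len: int = 40,         # assumed threshold
    entropy_threshold: float = 3.5,  # assumed threshold
    registered_labels: int = 2,      # naive: treat the last two labels as the domain
) -> bool:
    labels = query_name.rstrip(".").lower().split(".")
    subdomain = labels[:-registered_labels]
    if not subdomain:
        return False
    longest = max(len(label) for label in subdomain)
    joined = "".join(subdomain)
    # Short subdomains are skipped for the entropy test to avoid noisy results.
    high_entropy = len(joined) >= 16 and shannon_entropy(joined) > entropy_threshold
    return longest > max_label_len or high_entropy
```

A heuristic like this will produce false positives (for example on some CDN hostnames), so it is better suited to alerting and review than to automatic blocking.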

RCE Through Interactive System Shells

Data exfiltration is just the beginning of the problem. BeyondTrust's discovery reveals something far more alarming: the possibility of obtaining Remote Code Execution (RCE) — remote execution of arbitrary code on the server. This means that attackers can not only steal data but also gain complete control over the execution environment.

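The mechanism works as follows: once a communication channel through DNS is established, it can carry traffic in both directions. Code in the sandbox sends queries to the attacker's domain, the attacker's DNS server returns commands encoded in its responses, and the output of those commands goes back out encoded in further queries. The sandbox permits outgoing DNS queries and so does not block this exchange. In this way, attackers gain an interactive system shell: effectively a terminal from which they can execute any commands on the server.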

Given that Amazon Bedrock is a service managed by AWS and handles many sensitive business workloads, an RCE scenario is catastrophic. Attackers can:

- Modify AI models or training data

- Intercept all user requests processed by AI agents

- Install persistence mechanisms that maintain access even after the session is closed

- Move laterally to other systems in the corporate network

- Gain access to sensitive documents and data stored in S3 buckets

This is not a hypothetical threat. BeyondTrust researchers demonstrated practical attacks that successfully gained access to system shells. This means that every organization using Amazon Bedrock to run AI agents — and there are thousands of such organizations — is potentially at risk.

LangSmith: A Vulnerability in Debugging and Monitoring

LangSmith, developed by LangChain, is a tool for debugging, monitoring, and optimizing applications based on language models. It is extremely popular among developers building chatbots, AI assistants, and autonomous agents. The platform allows tracking every step of chain execution, which is invaluable for debugging complex AI systems.

However, the same transparency that makes LangSmith such a useful tool proves to be its weakness. Researchers discovered that LangSmith does not fully protect communication between client and server. Sensitive information — such as system prompts, API keys, user data — can be logged and stored insecurely.

For Polish developers working with LangChain (and there are increasingly more of them), this has concrete consequences. If they build an AI application that integrates with LangSmith for monitoring, they may unknowingly send sensitive data to LangChain servers. Although LangChain claims that data is encrypted and secured, the vulnerability discovered by BeyondTrust shows that this platform's security has serious gaps.

Particularly problematic is that many organizations do not know exactly what data reaches LangSmith. System prompts containing business instructions, training data, and even customer information can be logged automatically. If someone gains access to a LangSmith account, whether through phishing, a weak password, or the DNS vulnerability described above, they will have access to the entire execution history of the AI application.
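One way to limit what reaches any tracing backend is to redact payloads in the application before they are handed to the tracing client. The sketch below is a generic, assumed approach: the patterns, the key names, and the `redact` helper are illustrative, and how such a function is wired into a particular SDK is not shown here.

```python
import re

# Illustrative patterns only; real deployments need a list matched to their own secrets.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),           # shape of an AWS access key ID
    re.compile(r"sk-[A-Za-z0-9_-]{20,}"),      # common "sk-" style API keys
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),   # email addresses
]
SENSITIVE_KEYS = {"api_key", "authorization", "password", "token"}
PLACEHOLDER = "[REDACTED]"


def redact(value):
    """Return a copy of `value` with sensitive fields and patterns masked."""
    if isinstance(value, dict):
        return {
            key: PLACEHOLDER if str(key).lower() in SENSITIVE_KEYS else redact(item)
            for key, item in value.items()
        }
    if isinstance(value, list):
        return [redact(item) for item in value]
    if isinstance(value, str):
        for pattern in SECRET_PATTERNS:
            value = pattern.sub(PLACEHOLDER, value)
        return value
    return value  # numbers, booleans, None pass through unchanged


trace_payload = {
    "prompt": "Contact jan@example.com with key AKIAABCDEFGHIJKLMNOP",
    "api_key": "secret",
    "steps": [{"token": "abc", "n": 3}],
}
safe_payload = redact(trace_payload)
```

Redaction of this kind reduces exposure if the monitoring account or its storage is compromised, but pattern matching will miss secrets it does not know about, so it complements rather than replaces a review of what is being logged.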

SGLang: A Sandbox That Does Not Protect

SGLang is a lesser-known but equally important tool in the AI ecosystem. It is a framework for optimizing and executing language programs on models such as GPT or Llama. It is used primarily by researchers and advanced developers who want full control over the model execution process.

The vulnerability in SGLang is similar to that in Amazon Bedrock — the sandbox allows outgoing DNS queries that can be exploited for data exfiltration and RCE. However, SGLang is often run locally or in research environments where network security may be less rigorous. This means that the vulnerability in SGLang can be particularly dangerous for academic laboratories, startups, and R&D teams that may store sensitive data about new models or proprietary algorithms.

Given that SGLang is an open-source project, the vulnerability may have been known in the community for some time. However, the fact that BeyondTrust had to formally disclose it suggests that a fix was not a priority for the project maintainers. This is a classic problem in open-source tools — security often takes a backseat to functionality.

Impact on Polish Enterprises and Startups

The Polish technology industry is rapidly adopting AI tools. Many Polish startups and IT companies are building applications based on Amazon Bedrock, LangChain/LangSmith, and other tools from the LLM ecosystem. The vulnerability discovered by BeyondTrust has concrete implications for them.

First, every Polish company using Amazon Bedrock to build AI agents should immediately review its security configuration. AWS should provide a patch or recommendations for limiting outgoing DNS queries from sandboxes. However, in practice, many small companies may not have the resources to quickly implement such a change.

Second, companies using LangSmith for monitoring should analyze what data reaches this platform. If they are sending sensitive information there, they should consider alternative solutions or implement additional encryption at the application level.

Third, research teams working with SGLang should be aware of the threat and implement additional network security controls — for example, blocking outgoing DNS queries from machines running SGLang.

The Polish cybersecurity community, including companies active on the Polish market such as ESET and Asseco as well as smaller firms specializing in security consulting, should start offering security audit services for AI applications. This will be a growing market, especially now that such fundamental vulnerabilities are being disclosed.

Zero Trust Architecture as a Response

BeyondTrust in its report emphasizes the importance of Zero Trust architecture as protection against this type of attack. Zero Trust is an approach to security that assumes that no network traffic — even within an organization — should be trusted without verification.

In the context of AI sandboxes, Zero Trust means:

- Blocking all outgoing connections from the sandbox unless explicitly allowed

- Monitoring every DNS query and filtering suspicious queries

- Network segmentation so AI sandboxes are isolated from the rest of the infrastructure

- Logging and auditing every operation performed in the sandbox

- Implementing microsegmentation — each AI agent has access only to resources it actually needs
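The first item in the list above, default-deny egress, can be sketched as a small policy check. Everything here is an assumption for illustration: the rule type, the example hosts, and the idea that the check runs in a proxy or egress gateway in front of the sandbox. Real enforcement belongs in network controls, not only in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EgressRule:
    host_suffix: str  # exact host or parent domain that is permitted
    port: int


# Hypothetical allowlist; anything not listed is denied, including port 53.
ALLOWLIST = (
    EgressRule("s3.amazonaws.com", 443),
    EgressRule("internal-api.example.com", 443),
)


def is_egress_allowed(host: str, port: int, rules=ALLOWLIST) -> bool:
    """Default deny: permit only destinations that match an explicit rule."""
    host = host.rstrip(".").lower()
    for rule in rules:
        # Match on a label boundary so "evil-s3.amazonaws.com.attacker.example" fails.
        matches_host = host == rule.host_suffix or host.endswith("." + rule.host_suffix)
        if matches_host and port == rule.port:
            return True
    return False
```

With a policy like this, a sandbox that needs name resolution would use an internal resolver that only answers for allowlisted domains, instead of being able to reach arbitrary authoritative servers.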

This approach is more demanding than traditional perimeter security (firewall + VPN), but it is significantly more effective, especially for organizations that use AI intensively and want to minimize risk.

Amazon, Anthropic, and other companies should implement Zero Trust by default in their AI platforms. However, in practice, many of them prioritize functionality and ease of use over security. This leaves users in a difficult situation — they must implement additional security controls themselves to protect against vulnerabilities in the infrastructure.

A Lesson from the Past: Why Sandbox Security Is So Difficult

This is not the first time sandboxes have proven vulnerable to attacks. The history of computer security is full of examples where isolation that was supposed to be secure turned out to have fundamental flaws. From Java applets in the 1990s, through web browsers, to modern Docker containers — every sandbox technology has had its vulnerabilities.

The problem is that sandboxes must be useful — they must allow certain operations for code to execute. However, every operation that is allowed is a potential attack vector. DNS is a perfect example: it is essential for normal internet operation, but can be abused for data exfiltration.

Another classic example is System V IPC (Inter-Process Communication) in Linux. Sandboxes that do not restrict access to IPC can be bypassed through communication with processes outside the sandbox. Similarly, file system access can be abused to read sensitive data, even if the sandbox is supposed to be isolated.

Security researchers have long known that designing secure sandboxes is extremely difficult. It requires deep knowledge of operating systems, networking, security, and application architecture. However, many AI teams building these sandboxes do not have such expertise. The result is vulnerabilities like the one discovered by BeyondTrust.

What Users Should Do Now

For organizations already using Amazon Bedrock, LangSmith, or SGLang, there are several steps they should take immediately:

- Review security configuration: Check what firewall rules and security groups are set for AI sandboxes. Ensure that outgoing DNS queries are monitored or restricted.

- Rotate sensitive data: If you used Amazon Bedrock, change all API keys and passwords that could have been exposed. Assume that every environment variable you set in the sandbox could have been intercepted.

- Implement Zero Trust: Instead of relying on the sandbox for isolation, implement additional security controls at the network and application level.

- Monitoring and alerting: Configure tools to monitor anomalous DNS queries and outgoing connections from sandboxes.

- Data audit: If you sent data to LangSmith, analyze exactly what was logged. Consider deleting history or switching to an on-premises solution.
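For the monitoring and alerting step, one simple signal is the number of distinct names a single sandbox looks up under one domain, since tunneled data produces many unique subdomains. The sketch below assumes DNS logs are available as (client, query name) pairs and uses a naive two-label base domain; the function names and the threshold are hypothetical.

```python
from collections import defaultdict


def base_domain(name: str, registered_labels: int = 2) -> str:
    """Naive registered domain: the last `registered_labels` labels of the name."""
    labels = name.rstrip(".").lower().split(".")
    return ".".join(labels[-registered_labels:])


def find_noisy_domains(log, threshold: int = 50) -> dict:
    """Map (client, base domain) to its unique-name count when above `threshold`."""
    seen = defaultdict(set)
    for client, name in log:
        seen[(client, base_domain(name))].add(name.rstrip(".").lower())
    return {key: len(names) for key, names in seen.items() if len(names) > threshold}


# Example log: 60 unique subdomains of one domain, plus repeated normal lookups.
log = [("sandbox-1", f"chunk{i}.attacker.example") for i in range(60)]
log += [("sandbox-1", "www.example.com")] * 100
alerts = find_noisy_domains(log)
```

Counting unique names instead of total queries keeps frequently repeated legitimate lookups from triggering alerts, while a burst of never-repeated subdomains stands out.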

For organizations planning to implement AI, this vulnerability should be a lesson: do not rely on security provided by the vendor. Always implement additional layers of security, monitor network traffic, and assume that every sandbox can be bypassed.

The Polish technology industry, which is increasingly investing in AI, must be aware of these threats. It is not enough to simply integrate tools such as Amazon Bedrock or LangChain — they must be integrated securely, with full understanding of potential attack vectors.

More from Security

$285 Million Drift Hack Traced to Six-Month DPRK Social Engineering Operation

36 Malicious npm Packages Exploited Redis, PostgreSQL to Deploy Persistent Implants

Fortinet Patches Actively Exploited CVE-2026-35616 in FortiClient EMS

China-Linked TA416 Targets European Governments with PlugX and OAuth-Based Phishing

Related Articles

How LiteLLM Turned Developer Machines Into Credential Vaults for Attackers

Apr 6

Qilin and Warlock Ransomware Use Vulnerable Drivers to Disable 300+ EDR Tools

Apr 6

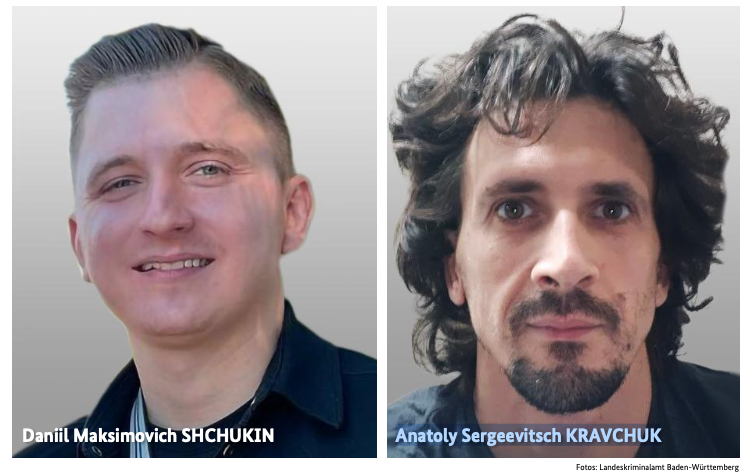

BKA Identifies REvil Leaders Behind 130 German Ransomware Attacks

Apr 6