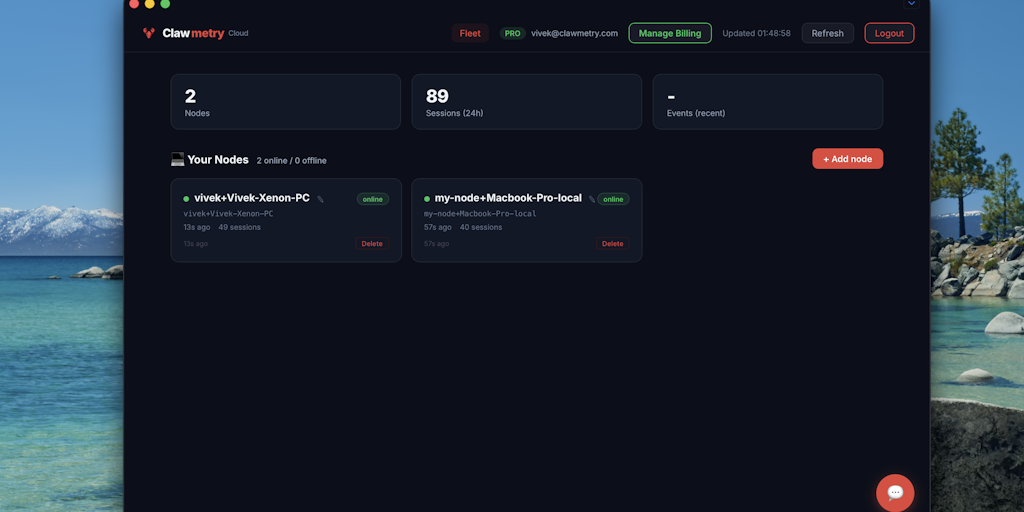

ClawMetry Cloud

ClawMetry Cloud is a new tool for real-time monitoring of OpenClaw AI agents. Its dashboard provides observability into agent operations, letting developers track processes, performance, and potential issues live. The product launched on Product Hunt in response to a growing industry need: as AI agents spread, so does demand for diagnostic tools. ClawMetry is designed to surface bottlenecks and anomalies in autonomous systems quickly. For practicing engineers, this means debugging complex AI workflows without digging through raw logs. Spotting problems directly in the dashboard shortens incident resolution time and improves the stability of production systems. The tool is a step toward more transparent and manageable agent-based systems, which matters more and more as businesses rely on autonomous decision-making.

In the world of artificial intelligence, observing what happens inside an AI agent in real time is still a largely unsolved problem. Most developers working with advanced AI models have to make do with text logs, fragmented reports, and retrospective analysis, all available only after the process has finished. ClawMetry Cloud aims to change this, presenting itself as the first dashboard for monitoring OpenClaw agents with real-time observability. The product recently launched on Product Hunt, where it caught the attention of the AI and automation community.

What exactly is ClawMetry Cloud, and why should it interest Polish developers and teams working with AI agents? The answer lies in a fundamental problem faced by anyone deploying advanced autonomous systems: without insight into what the agent is doing, when it is doing it, and why it makes particular decisions, debugging becomes guesswork. ClawMetry addresses this by letting you track every step, decision, and interaction the agent has with its environment.

Observability as a strategic element of AI development

Observability is a concept that for years was associated mainly with cloud infrastructure and distributed systems. However, as AI agents built on models like GPT or Claude have grown more complex, observability has become just as important for AI as for traditional IT infrastructure. Without it, a developer sees only the input (the prompt) and the output (the agent's response), with no insight into the intermediate reasoning and decisions.

ClawMetry Cloud is built around this problem. The platform was designed specifically for the OpenClaw ecosystem, a framework for building autonomous AI agents that is gaining popularity among developers seeking an alternative to more general solutions. Its integration with OpenClaw lets ClawMetry instrument agents in depth without requiring users to write additional code or handle complex configuration.

The real value of observability here is the ability to quickly pinpoint where the agent goes wrong. Is the problem prompt interpretation? Tool selection? Decision logic? ClawMetry aims to answer these questions in real time instead of forcing developers to analyze logs after the fact.

What exactly can you see on the ClawMetry dashboard?

The ClawMetry Cloud dashboard is not just a simple table with logs. It's an interactive visual interface that allows you to track:

- Agent decision flow — every step the agent takes, from prompt analysis through tool selection to response generation

- Tool and API usage — which external systems the agent called, what parameters were used, and what the results were

- Execution times — precise measurements of the time for each stage, allowing you to identify bottlenecks

- Performance metrics — indicators such as percentage of successful calls, average response time, or number of attempts needed to get the correct result

- Errors and anomalies — the system automatically flags abnormal behavior and suggests possible causes

- Interaction history — complete record of every conversation with the agent, along with context and state changes
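To make the performance metrics above concrete, here is a minimal Python sketch of how a success rate, an average response time, and an average number of attempts could be computed from per-call records. The `CallRecord` fields and the `summarize` helper are hypothetical illustrations, not ClawMetry's data model or API.

```python
from dataclasses import dataclass
from statistics import mean


# Hypothetical record of one agent task; field names are illustrative,
# not ClawMetry's actual schema.
@dataclass
class CallRecord:
    task_id: str
    succeeded: bool
    latency_ms: float
    attempts: int  # tries needed before the task succeeded (or gave up)


def summarize(records: list[CallRecord]) -> dict[str, float]:
    """Compute headline metrics (success rate, mean latency, mean attempts)."""
    if not records:
        return {"success_rate_pct": 0.0, "avg_latency_ms": 0.0, "avg_attempts": 0.0}
    return {
        # Booleans sum as 0/1, so this counts successful calls.
        "success_rate_pct": 100.0 * sum(r.succeeded for r in records) / len(records),
        "avg_latency_ms": mean(r.latency_ms for r in records),
        "avg_attempts": mean(r.attempts for r in records),
    }


if __name__ == "__main__":
    batch = [
        CallRecord("t1", True, 820.0, 1),
        CallRecord("t2", False, 2400.0, 3),
        CallRecord("t3", True, 1100.0, 2),
        CallRecord("t4", True, 680.0, 1),
    ]
    print(summarize(batch))
```

For the sample batch this yields a 75% success rate, an average latency of 1250 ms, and 1.75 attempts per task.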

What distinguishes ClawMetry from general monitoring tools is that every element is tailored to AI agents. Instead of raw system metrics, you see the abstractions that actually matter to people building and optimizing agents.

Integration with OpenClaw: how does it work in practice?

One of the biggest advantages of ClawMetry Cloud is its seamless integration with OpenClaw. Instead of requiring developers to manually instrument code, ClawMetry simply connects and starts collecting data. This "plug-and-play" approach means that teams can start monitoring their agents almost immediately.

In practice, this means a developer writes an agent in OpenClaw in the standard way, and ClawMetry automatically captures all relevant events. There's no need to add logging manually, no need for complicated configuration — the platform simply knows what to look for and how to visualize it. This is particularly important for small teams and startups that don't have the resources to build their own observability systems from scratch.
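The source does not describe how ClawMetry captures events automatically. One common pattern for zero-effort instrumentation is for the framework itself to wrap each registered tool, so every call is timed and recorded without the agent author adding log statements. The sketch below assumes that pattern; `traced_tool`, `EVENTS`, and `search_orders` are hypothetical names, not OpenClaw or ClawMetry APIs.

```python
import functools
import time
from typing import Any, Callable

# Hypothetical in-process event buffer; a real integration would export these.
EVENTS: list[dict[str, Any]] = []


def traced_tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record tool name, arguments, result or error, and duration for each call."""

    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        event: dict[str, Any] = {"tool": fn.__name__, "args": args, "kwargs": kwargs}
        try:
            result = fn(*args, **kwargs)
            event.update(status="ok", result=result)
            return result
        except Exception as exc:
            event.update(status="error", error=repr(exc))
            raise  # instrumentation must not swallow the agent's errors
        finally:
            event["duration_ms"] = (time.perf_counter() - start) * 1000
            EVENTS.append(event)

    return wrapper


@traced_tool
def search_orders(customer_id: str) -> list[str]:
    # Stand-in for a real database lookup (hypothetical tool).
    return [f"order-{customer_id}-1"]


if __name__ == "__main__":
    search_orders("42")
    print(EVENTS[-1]["tool"], EVENTS[-1]["status"])  # search_orders ok
```

In a real integration the framework, not the developer, would apply the wrapper, and the events would go to the monitoring backend rather than stay in a list.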

For more advanced users, ClawMetry also offers APIs and webhooks that allow integration with other tools in the ecosystem. For example, it's possible to send alerts to Slack when an agent encounters a problem, or export data to your own analytics systems.
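As a rough illustration of the Slack case, the sketch below converts an alert into a Slack incoming-webhook message, which Slack accepts as a JSON body with a `text` field. The shape of the incoming alert (`agent`, `type`, `message`) is an assumption, since ClawMetry's webhook payload format is not documented in the source.

```python
import json
import urllib.request


def build_slack_message(event: dict) -> dict:
    """Turn a hypothetical ClawMetry alert payload into a Slack message body."""
    agent = event.get("agent", "unknown-agent")  # assumed field name
    kind = event.get("type", "alert")  # assumed field name
    detail = event.get("message", "no details")  # assumed field name
    return {"text": f":rotating_light: [{agent}] {kind}: {detail}"}


def post_to_slack(webhook_url: str, message: dict) -> int:
    """POST the message to a Slack incoming webhook and return the HTTP status."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps(message).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status


if __name__ == "__main__":
    incoming = {"agent": "support-bot", "type": "tool_error", "message": "orders_db timed out"}
    print(build_slack_message(incoming)["text"])
```

Keeping message formatting separate from the HTTP call makes the formatting easy to test without a live webhook URL.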

Competition and market position

The market for AI agent observability tools is young but growing quickly. General-purpose platforms for monitoring AI applications already exist, such as LangSmith and Arize, but ClawMetry occupies a niche: it is a dedicated solution for a single framework. This approach has both advantages and disadvantages.

The advantage is depth of integration. Because ClawMetry is built specifically for OpenClaw, it can offer features and a level of detail that general platforms may find hard to match. The obvious disadvantage is scope: if you build agents with a different framework, ClawMetry is not an option for you. For teams committed to the OpenClaw ecosystem, however, this specialization is a strength.

It's also notable that ClawMetry launched on Product Hunt, which suggests the team wants to build a community around the tool. That is a sensible strategy: developer tools grow through adoption and word of mouth, and Product Hunt is a natural place to reach early adopters and influencers in the AI industry.

Use cases — where ClawMetry really shines

To understand the value of ClawMetry, it's worth looking at specific scenarios where this tool changes the game. Imagine you're responsible for an AI agent that handles customer service for an e-commerce business. The agent should classify queries, search a database for information, and generate responses. When something goes wrong — and something always goes wrong — you need to quickly identify the problem.

Without ClawMetry, debugging would be tedious: you'd check logs, try to reproduce the problem, analyze what went wrong. With ClawMetry, you see exactly the moment when the agent made a mistake. Maybe instead of searching the database, it selected the wrong tool? Maybe it misinterpreted the customer's query? The dashboard shows you this immediately, along with suggestions on how to fix it.
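Seeing "the exact moment the agent made a mistake" boils down to walking a recorded trace in order and stopping at the first step that was flagged. In the sketch below the step names, the `status` field, and the flagging itself are hypothetical; ClawMetry's actual trace format is not described in the source.

```python
from typing import Optional

# Hypothetical trace format: ordered steps, each with a name, detail, and status.
Step = dict[str, str]


def first_failure(trace: list[Step]) -> Optional[tuple[int, Step]]:
    """Return (index, step) for the first step not marked 'ok', or None."""
    for i, step in enumerate(trace):
        if step.get("status") != "ok":
            return i, step
    return None


if __name__ == "__main__":
    trace = [
        {"step": "classify_query", "detail": "intent=order_status", "status": "ok"},
        {"step": "select_tool", "detail": "chose web_search instead of orders_db", "status": "suspect"},
        {"step": "generate_reply", "detail": "answered from search snippets", "status": "ok"},
    ]
    hit = first_failure(trace)
    if hit:
        idx, step = hit
        print(f"step {idx}: {step['step']} ({step['detail']})")
```

Here the report points at the tool-selection step rather than the final reply, which is where the e-commerce example above would need fixing.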

Another example: performance optimization. If an agent is running slowly, ClawMetry allows you to see where time is being wasted. Is the problem in API calls? In data processing? In text generation by the model? With precise time metrics, you can make informed decisions about optimization.
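Answering "where is the time going?" amounts to grouping timed spans by stage and ranking them. The minimal sketch below assumes each span is reported as a `(stage, duration_ms)` pair; the stage names and the `time_by_stage` helper are illustrative, not part of ClawMetry.

```python
from collections import defaultdict


def time_by_stage(spans: list[tuple[str, float]]) -> list[tuple[str, float, float]]:
    """Sum durations per stage; return (stage, total_ms, share_pct), slowest first."""
    totals: dict[str, float] = defaultdict(float)
    for stage, duration_ms in spans:
        totals[stage] += duration_ms
    grand_total = sum(totals.values()) or 1.0  # avoid division by zero
    return sorted(
        ((stage, ms, 100.0 * ms / grand_total) for stage, ms in totals.items()),
        key=lambda row: row[1],
        reverse=True,
    )


if __name__ == "__main__":
    spans = [
        ("api_call", 900.0),
        ("llm_generation", 1500.0),
        ("data_processing", 100.0),
        ("api_call", 1500.0),
    ]
    for stage, ms, pct in time_by_stage(spans):
        print(f"{stage:<16} {ms:>7.0f} ms {pct:5.1f}%")
```

With these sample spans, API calls account for 2400 of 4000 ms (60%), so they would be the first place to look.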

Technical aspects and architecture

From a technical perspective, ClawMetry Cloud must deal with several challenges. First, it must collect huge amounts of data in real time without degrading agent performance. Second, it must store, index, and present this data in the dashboard in a way that queries are fast even for large amounts of historical data.
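The first challenge, collecting data without slowing the agent down, is usually met by making telemetry fire-and-forget: the agent drops events into a bounded in-memory queue and a background thread ships them in batches. The sketch below shows that general technique, assuming ClawMetry's client works along similar lines; `BatchingExporter` and its parameters are hypothetical.

```python
import queue
import threading
from typing import Any, Callable


class BatchingExporter:
    """Hypothetical telemetry client: record() never blocks, a worker ships batches."""

    def __init__(
        self,
        send: Callable[[list[Any]], None],
        max_queue: int = 10_000,
        batch_size: int = 100,
        flush_interval_s: float = 1.0,
    ) -> None:
        self._send = send  # e.g. an HTTP POST to a collector (assumed)
        self._queue: queue.Queue = queue.Queue(maxsize=max_queue)
        self._batch_size = batch_size
        self._interval = flush_interval_s
        self._stop = threading.Event()
        self.dropped = 0  # events discarded because the buffer was full
        self.failed_batches = 0  # batches whose send() raised
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def record(self, event: Any) -> None:
        """Called on the agent's hot path: enqueue without waiting, drop if full."""
        try:
            self._queue.put_nowait(event)
        except queue.Full:
            self.dropped += 1

    def _flush(self) -> None:
        """Send everything currently queued, in chunks of at most batch_size."""
        while True:
            batch: list[Any] = []
            while len(batch) < self._batch_size:
                try:
                    batch.append(self._queue.get_nowait())
                except queue.Empty:
                    break
            if not batch:
                return
            try:
                self._send(batch)
            except Exception:
                self.failed_batches += 1  # a real client might retry with backoff

    def _run(self) -> None:
        # Wake every flush interval, or immediately once close() sets the event.
        while not self._stop.wait(self._interval):
            self._flush()
        self._flush()  # final flush of whatever is left

    def close(self) -> None:
        """Stop the worker after it has flushed the remaining events."""
        self._stop.set()
        self._worker.join()


if __name__ == "__main__":
    received: list[list[Any]] = []
    exporter = BatchingExporter(received.append, batch_size=2, flush_interval_s=0.05)
    for i in range(5):
        exporter.record({"step": i})
    exporter.close()
    print([len(b) for b in received])
```

Dropping events when the buffer is full is a deliberate trade-off: the agent's latency stays predictable even if the collector is slow, at the cost of occasionally incomplete traces.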

The cloud architecture suggests that ClawMetry relies on distributed systems to handle this load. Agent data is likely sent to a central service, where it is processed and stored; the dashboard is then a web application that queries this data. This approach makes sense because it scales: as more teams adopt ClawMetry, the infrastructure can grow without degrading service for individual users.
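On the storage side, keeping dashboard queries fast over large histories largely comes down to indexing events by time, so a query such as "the last 15 minutes" touches only the relevant slice. The toy in-memory store below illustrates the idea with binary search; a production service would more likely use a time-series or columnar database, and `TimeIndexedStore` does not reflect ClawMetry's actual backend.

```python
import bisect
from typing import Any


class TimeIndexedStore:
    """Toy central store that keeps events sorted by timestamp for range queries."""

    def __init__(self) -> None:
        self._ts: list[float] = []  # sorted timestamps
        self._events: list[Any] = []  # events aligned with self._ts

    def ingest(self, ts: float, event: Any) -> None:
        # Binary search for the slot (O(log n)); list insertion itself is O(n),
        # acceptable for a sketch but not for a real high-volume backend.
        i = bisect.bisect_right(self._ts, ts)
        self._ts.insert(i, ts)
        self._events.insert(i, event)

    def query(self, start: float, end: float) -> list[Any]:
        """Return events with start <= ts < end using two binary searches."""
        lo = bisect.bisect_left(self._ts, start)
        hi = bisect.bisect_left(self._ts, end)
        return self._events[lo:hi]


if __name__ == "__main__":
    store = TimeIndexedStore()
    for ts, name in [(30.0, "c"), (10.0, "a"), (20.0, "b"), (40.0, "d")]:
        store.ingest(ts, {"event": name})
    print(store.query(15.0, 35.0))  # [{'event': 'b'}, {'event': 'c'}]
```

The query cost depends on the size of the returned window, not on how much history has accumulated, which is the property a dashboard needs.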

The issue of security and privacy is crucial here. AI agents can process sensitive data — customer data, trade secrets, proprietary information. ClawMetry must ensure that this data is securely transmitted, encrypted at rest, and accessible only to authorized users. So far we don't have full details on how ClawMetry approaches security, but for a product aimed at enterprises, this will be key.
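Whatever guarantees ClawMetry eventually documents, a team can reduce its exposure by scrubbing obvious sensitive values from events before they leave its own infrastructure. The sketch below is deliberately simple and over-eager: the two regular expressions (email addresses and 13 to 16 digit card-like numbers) are illustrative assumptions, not a complete PII filter and not a ClawMetry feature.

```python
import re
from typing import Any

# Illustrative patterns only; real PII detection needs far more than two regexes.
_PATTERNS = [
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[EMAIL]"),
    # A digit followed by 12 to 15 more digits, optionally separated by spaces or dashes.
    (re.compile(r"\b\d(?:[ -]?\d){12,15}\b"), "[CARD]"),
]


def redact(value: Any) -> Any:
    """Recursively scrub strings inside dicts and lists before export."""
    if isinstance(value, str):
        for pattern, label in _PATTERNS:
            value = pattern.sub(label, value)
        return value
    if isinstance(value, dict):
        return {k: redact(v) for k, v in value.items()}
    if isinstance(value, list):
        return [redact(v) for v in value]
    return value  # numbers, None, etc. pass through unchanged


if __name__ == "__main__":
    event = {"prompt": "Refund for jan.kowalski@example.com, card 4111 1111 1111 1111"}
    print(redact(event))
```

Because it runs inside the agent process, this kind of filter applies regardless of which monitoring vendor receives the data.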

Implications for the Polish AI market

On the Polish market, we're observing dynamic growth in interest in AI agents and automation. More and more companies are experimenting with implementing advanced AI systems, but they lack tools to monitor and debug them. ClawMetry Cloud can fill this gap, especially for teams that have chosen OpenClaw as their framework.

For Polish AI startups, a tool like ClawMetry can be a game-changer. It allows them to iterate faster, better understand the behavior of their agents, and ultimately reach a product that actually works more quickly. For larger companies, ClawMetry offers a tool for monitoring production agents, which is crucial for maintaining system reliability.

It's also worth noting that ClawMetry's appearance on Product Hunt is a signal that the OpenClaw ecosystem is attracting more and more attention from tool builders and infrastructure developers. This in turn suggests that OpenClaw may become a more popular choice for developers, especially those looking for an alternative to more general frameworks.

Future perspectives and product evolution

If ClawMetry Cloud continues on its trajectory, we can expect several natural extensions of the product. First, support for other frameworks — while specialization in OpenClaw is currently an advantage, in the long term the team will want to expand its user base. Second, more advanced analytical features — for example, machine learning for automatic anomaly detection or suggesting optimizations.

Other possible directions for development include integration with popular project management and CI/CD tools, which would allow automatic triggering of alerts or actions based on agent metrics. It's also possible that ClawMetry will experiment with generative AI to automatically generate reports or even debugging suggestions.

One thing is certain — observability of AI agents is a problem that will grow in importance as the complexity and number of deployed systems increases. ClawMetry Cloud positions itself as a player that understands this problem deeply and is building a solution that actually solves it. This is not another generic logging tool — it's a dedicated solution for a specific problem, built by people who understand the industry.
