Offload

Photo: Product Hunt AI

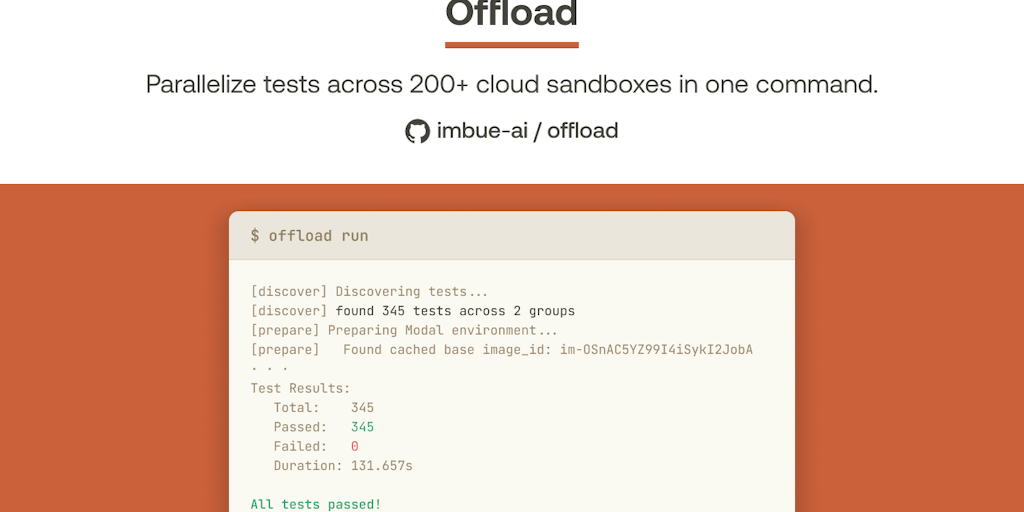

The startup Imbue has released Offload, an open-source tool that speeds up testing for AI coding agents. The problem is straightforward: when several AI agents work in parallel, the test suite becomes a bottleneck, with agents waiting in queues or competing for local resources, which leads to unstable results. Offload is a CLI written in Rust that distributes tests across more than 200 isolated sandboxes in the cloud. A single TOML configuration file is all that's needed, with no need to rewrite tests. The reported results are impressive: on a Playwright test suite, execution time fell from 12 minutes to 2 minutes, at a cost of just 8 cents per run. This is the third tool in Imbue's portfolio, which focuses on building AI "loyal to the user." The tool addresses a specific pain point for developers working with AI agents: long waits for test results can drastically slow down iteration. For teams that rely heavily on code automation, this could be a significant productivity boost.

Waiting for test results is one of those nagging problems in software development that seems trivial until you work with AI agents. Then those ten minutes per test run are no longer just a frustration; they are a completely blocked workflow in which the agent sits idle, waiting for a response. The startup Imbue noticed this problem and decided to do something about it. Today it launched Offload, a tool that promises to change how AI agents run tests by giving them parallel access to more than 200 isolated cloud sandboxes.

This is not another test management app. Offload answers a specific problem that emerges when you work with autonomous AI systems, one that traditional testing tools simply weren't designed to solve. Before we dive into the details, it's worth understanding why Imbue is tackling this problem and what the tool changes in practice.

Why AI agents need a different approach to testing

The traditional developer workflow is: fix code, run tests, wait for results. If tests take ten minutes, you switch to something else. If they take two minutes, you wait. If they take half an hour, you go get coffee. That's normal. But an AI agent? An agent can't switch. An agent won't go get coffee.

When an autonomous system has to wait for test results before making its next decision, every delay is multiplied across the whole task. If an agent wants to try ten different approaches to a problem, and each test run takes 12 minutes, that's two hours of waiting. During that time the agent can't do anything. It can't learn, it can't experiment, it can't iterate. It just sits.

Imbue observed exactly this scenario: coding agents waiting on integration tests when they could have been working on something else. The problem isn't new, but AI agents change its scale. In the traditional world, developers are counted in dozens or hundreds per company. Agents can run in far greater numbers, so the count of concurrent test runs grows dramatically.

Offload: solution architecture

Instead of building another web interface or a subscription SaaS, Imbue went in a direction that makes sense to me: an open command-line tool written in Rust. This is no accident. Rust is a language well known to infrastructure engineers, and a CLI is something agents can invoke directly, without additional abstraction layers.

Offload works simply: instead of running a test suite locally or on a single server, it distributes the suite across more than 200 isolated cloud sandboxes. Each test or group of tests goes to a separate environment. No queuing, no competition for local resources, no flaky tests caused by a shortage of RAM or disk space. Each test gets a clean, dedicated instance.
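The idea of splitting a suite into groups, one per sandbox, can be sketched in a few lines. This is a minimal illustration, not Offload's actual scheduler: the function name `shard_tests`, the round-robin strategy, and the cap of 200 are assumptions made for the example, with the cap loosely based on the "over 200 sandboxes" figure above.

```python
from typing import List


def shard_tests(test_ids: List[str], max_sandboxes: int = 200) -> List[List[str]]:
    """Split test IDs into at most `max_sandboxes` groups, round-robin.

    Hypothetical helper for illustration; a real scheduler might balance
    groups by historical test duration instead of by count.
    """
    if max_sandboxes < 1:
        raise ValueError("max_sandboxes must be at least 1")
    # Never create more groups than there are tests.
    n_shards = min(max_sandboxes, len(test_ids))
    shards: List[List[str]] = [[] for _ in range(n_shards)]
    for i, test_id in enumerate(test_ids):
        shards[i % n_shards].append(test_id)
    return shards


# 450 made-up test names spread over the assumed 200 sandboxes.
tests = [f"test_{i}" for i in range(450)]
shards = shard_tests(tests)
print(len(shards), max(len(s) for s in shards), min(len(s) for s in shards))
```

With fewer tests than sandboxes, every test lands in its own group, which matches the "each test gets a dedicated instance" case described above.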

Configuration is a single TOML file: no rewriting tests, no refactoring, no changes to your CI/CD pipeline. Agents can invoke Offload exactly as they would invoke a local test runner. For the agent the change is transparent. Nothing changes on its side, but under the hood the test runs in the cloud instead of on the local machine and returns a result.

The results speak for themselves. On Imbue's Playwright test suite, which used to take 12 minutes, the run time dropped to 2 minutes. Cost? Eight cents per run. That is roughly a sixfold speedup, not a marginal gain.

Parallelism as a fundamental shift

The key word here is parallelism. Traditional testing tools try to parallelize tests on a local machine. You can run a few processes at once, but you're limited by CPU, memory, and disk. Offload moves that ceiling far higher. If you have 200 tests, you can run 200 tests simultaneously, each in its own sandbox in the cloud.

This is a fundamental shift in how we think about testing. Instead of optimizing tests to be faster, you optimize the workflow to be more parallel. Instead of waiting for the whole sequence, you wait for the slowest test in the group. In practice this means that even if one test takes 5 minutes and the others take 30 seconds each, the whole run takes about 5 minutes rather than the sum of all the durations.
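The difference between waiting for a sequence and waiting for the slowest test comes down to a sum versus a maximum. The sketch below uses illustrative numbers (one 5-minute test plus nine 30-second tests; the count of nine is an assumption) and ignores sandbox startup and scheduling overhead.

```python
# Durations in seconds: one slow test and nine fast ones (illustrative values).
durations_s = [300] + [30] * 9

# Run one after another, the wall-clock time is the sum of all durations.
sequential_s = sum(durations_s)

# With one sandbox per test, the wall-clock time is set by the slowest test.
parallel_s = max(durations_s)

print(f"sequential: {sequential_s / 60:.1f} min")  # 9.5 min
print(f"parallel:   {parallel_s / 60:.1f} min")    # 5.0 min
```

Adding more fast tests keeps increasing the sequential total, while the parallel figure stays pinned at the slowest test as long as there is a sandbox for each one.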

For AI agents this changes the economics of decision-making. An agent can experiment faster because it doesn't wait on long tests. It can iterate through more variants in the same time. This can translate to better code quality because the agent has more time to explore solutions.

Openness as a business strategy

Offload is open-source, and that is a deliberate choice: Imbue is clearly betting on a collaborative development model. The code is on GitHub, and anyone can fork and modify it. For a startup this is risky, but for the ecosystem it's a signal that Imbue is thinking long-term about how agents will operate.

Openness also has practical significance. Developers can see how Offload works under the hood, can propose improvements, can adapt the tool to their specific needs. In the world of AI agents, where the ecosystem is still forming, this approach makes sense. Imbue is not trying to be the only provider — it's trying to be part of the infrastructure.

This also sets Imbue apart from most AI startups. Instead of locking up technology and selling access, they share tools. Instead of building a proprietary platform, they build components that others can integrate. This is a long-term game — building a reputation as a company that understands what developers and AI agents need.

Cloud economics and cost scaling

Eight cents per full test run is a number worth analyzing. For a single developer that's peanuts. But for a company running hundreds of test runs daily, it can add up. The question is: does the speed justify the cost?

The answer depends on context. If you have an agent experimenting with code, every minute saved on testing is time the agent can spend on more experiments. If an agent can run 60 iterations daily instead of 5 because tests are faster, the economics change. Testing costs rise, but the value generated by the agent rises faster.
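A back-of-the-envelope calculation makes the trade-off visible. The sketch below combines the article's hypothetical 60 and 5 daily iterations with the 12-minute and 2-minute suite times and the 8-cent price reported for Imbue's Playwright suite. Combining them this way is an assumption, and the cost of local compute is not modeled.

```python
COST_PER_RUN_CENTS = 8      # per-run price quoted for the Playwright suite
LOCAL_SUITE_MINUTES = 12    # reported local run time
OFFLOAD_SUITE_MINUTES = 2   # reported run time with Offload


def daily_cost_cents(runs_per_day: int) -> int:
    """Cloud spend per day, in whole cents to avoid float rounding."""
    return runs_per_day * COST_PER_RUN_CENTS


def daily_wait_minutes(runs_per_day: int, suite_minutes: int) -> int:
    """Minutes per day an agent spends blocked on test runs."""
    return runs_per_day * suite_minutes


runs = 60
cost = daily_cost_cents(runs)
wait_offload = daily_wait_minutes(runs, OFFLOAD_SUITE_MINUTES)
wait_local = daily_wait_minutes(runs, LOCAL_SUITE_MINUTES)

print(f"{runs} runs/day cost ${cost / 100:.2f}")
print(f"waiting: {wait_offload} min offloaded vs {wait_local} min locally")

# The same 60 minutes of waiting buys 5 local runs or 30 offloaded ones.
print(daily_wait_minutes(5, LOCAL_SUITE_MINUTES),
      daily_wait_minutes(30, OFFLOAD_SUITE_MINUTES))
```

Under these assumptions, 60 daily runs cost under five dollars and take two hours of waiting, whereas the same number of local runs would block the agent for twelve hours.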

Imbue has clearly done this math. It quotes $0.08 per run, a figure that is transparent and measurable. Not "you pay for access", not "subscription depends on number of tests", but a concrete price for a concrete result. This is an approach I like because it allows for precise budget planning.

In Poland, where IT infrastructure costs are typically lower than in the US, such a service could be particularly attractive. Polish companies working with AI, agents, or test automation can afford to experiment with such a tool without huge capital expenditures.

Integration with existing ecosystem

Offload doesn't try to be an entire CI/CD system. It's a tool you integrate into your existing pipeline. You can have GitHub Actions, GitLab CI, or anything else — Offload is just a runner that you swap in for your local test command. This minimizes adoption friction.

For Polish teams that might use different tools — some older, some newer — this flexibility matters. You don't need to refactor your entire infrastructure to use Offload. You add it where it makes sense, and that's it.

The thing is, Imbue understands the real world of development. It knows that not every team has a clean, modernized tech stack. It knows that older projects have older tools. Instead of requiring full transformation, Offload comes in as a tool that works with what you already have.

The future of agents and testing infrastructure

Offload is a symptom of a larger trend. As AI agents become more autonomous and more productive, infrastructure must adapt. Testing setups built around a human developer's pace don't fit agents. Agents must test faster, must iterate faster, and must have access to resources that allow parallel experimentation.

Imbue, as a company, clearly positions itself in the role of building this infrastructure. This is their third launch — which means they're building a portfolio of tools, not a single product. Offload is part of a larger vision where AI agents have access to tools that allow them to work efficiently.

Five years from now, tools like Offload could be standard. Just as every project today has CI/CD, tomorrow every project with AI agents may have a parallel test runner in the cloud. Imbue is trying to be that standard, or at least one of the main players in this space.
