Unsloth Studio

Photo: Product Hunt AI

Unsloth is a tool for running artificial intelligence models directly on your own hardware, without relying on cloud services. It is attracting interest from users who want independence from major AI providers, and it responds to concerns about data privacy and the cost of cloud processing. Working with models locally means full control over your data and potential savings on subscriptions to online services. The product has a 5.0 rating on Product Hunt, although the number of reviews is still limited. Its community has 362 followers, which suggests an early stage of adoption with room to grow. For programmers and companies processing sensitive data, it is a particularly attractive alternative. Unsloth points toward a more decentralized AI ecosystem, in which local systems become competitive with cloud solutions.

Unsloth is a tool that transforms the fundamentals of how we work with AI models on our own computers. In an era when technology giants like OpenAI or Google offer access to advanced models through the cloud, the emergence of a solution enabling the running of powerful models locally represents a true breakthrough. The platform received an excellent 5.0 rating on Product Hunt, which shows that the community of developers and AI enthusiasts recognizes the real value of this approach. This is not just an ordinary application — it is a paradigm shift in access to artificial intelligence.

Why is this change so important? The reasons are both practical and ideological. First, running AI models locally means full control over data: nothing is sent to third-party servers, which is crucial for companies handling sensitive data, financial institutions, and organizations with strict privacy obligations. Second, it removes the dependence on internet access and on subscription costs, which can add up quickly with intensive use. Third, it opens possibilities for developers working in countries with limited access to cloud services or with poor network bandwidth.

Unsloth Studio appears at a moment when interest in local AI is exploding. More and more people realize that they do not need to be dependent on APIs or cloud services to work with the latest language models. The question is: does Unsloth really deliver what it promises, and how does it fit into the broader ecosystem of tools for local AI?

What is Unsloth and how does it work?

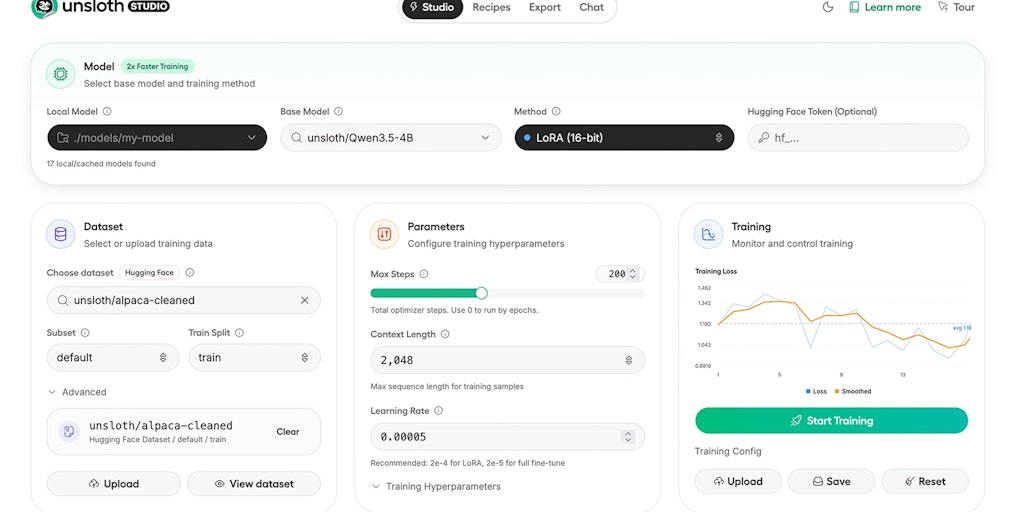

Unsloth is a platform optimized for running and training large language models (LLMs) directly on user hardware. The name is telling: it plays on "sloth," so "unsloth" suggests making something less slow, which reflects the idea behind the project. The goal is to make AI models work faster and more efficiently on local hardware, instead of waiting for responses from the cloud or dealing with network delays.

The basic idea is performance optimization. Traditionally, running large AI models requires enormous computing resources: high-power GPUs, large amounts of RAM, and fast SSDs. Unsloth addresses this problem through optimization techniques that allow models to run on more modest hardware without a drastic loss of performance. This has practical consequences for developers who do not have access to data-center hardware.

The platform supports popular open-source models such as Llama 2, Mistral, and Qwen. Users can choose between different model sizes, from lighter 7B-parameter versions that can run on a laptop with a dedicated GPU to larger 70B models that require much more powerful GPUs but are significantly more capable. This flexibility is key because it lets users match a model to their hardware and needs.

Performance optimization — where Unsloth stands out

The technical core of Unsloth lies in several key optimizations. The first is model quantization, a technique that shrinks a model by storing its weights at lower numerical precision. Instead of keeping neural network weights as 32-bit floating-point numbers, Unsloth can use 8-bit or even 4-bit representations. For most applications the difference in answer quality is small, while the memory savings are large: an 8-bit weight takes a quarter of the space of a 32-bit one, and a 4-bit weight an eighth.
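To make the idea concrete, here is a minimal sketch of one simple quantization scheme, absmax quantization with a single shared scale. It is an illustration only and is not claimed to be the scheme Unsloth uses internally. The function names and the example weights are made up for this sketch.

```python
def quantize_absmax(weights, bits=8):
    """Map floats to signed integers using one shared scale (absmax quantization)."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8-bit, 7 for 4-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical example weights, not taken from any real model.
weights = [0.12, -0.5, 0.33, 0.9, -0.07]

q8, scale8 = quantize_absmax(weights, bits=8)
restored = dequantize(q8, scale8)

# The rounding error per weight is at most half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("8-bit integers:", q8)
print("largest round-trip error:", max_error)
```

Each weight is stored as a small integer plus one shared scale factor, which is where the memory saving comes from. Calling `quantize_absmax(weights, bits=4)` shows the coarser 4-bit version of the same idea.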

Second, optimizations at the GPU kernel level. Unsloth is written with the goal of maximizing the capabilities of modern graphics processors, particularly NVIDIA cards. The code is optimized for CUDA — NVIDIA's computing platform — which means models run much faster than with generic implementations. This is not a magic trick, but consistent work on every aspect of the code.

Third, efficient training through techniques such as LoRA (Low-Rank Adaptation). Instead of updating every parameter of the model, which is computationally very expensive, LoRA keeps the original weights frozen and trains small low-rank matrices added alongside them. This means you can adapt an advanced model to specialized tasks at a fraction of the computational cost of full fine-tuning.
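The saving can be shown with a little arithmetic. The sketch below follows the standard LoRA formulation, in which a frozen weight matrix W is combined with two small trainable matrices A and B, and the output is W x + (alpha / rank) * B (A x). The layer size of 4096 and the rank of 16 are illustrative assumptions, and the helper names are hypothetical.

```python
def lora_param_counts(d_in, d_out, rank):
    """Compare parameters in a full weight matrix with those in a LoRA adapter."""
    full = d_in * d_out                  # frozen matrix W (d_out x d_in)
    adapter = rank * (d_in + d_out)      # A (rank x d_in) plus B (d_out x rank)
    return full, adapter


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha, rank):
    """Compute W x + (alpha / rank) * B (A x); only A and B would be trained."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scaling = alpha / rank
    return [b + scaling * u for b, u in zip(base, update)]


# Assumed sizes for illustration: one 4096 x 4096 layer with rank 16.
full, adapter = lora_param_counts(d_in=4096, d_out=4096, rank=16)
print("full matrix parameters:", full)
print("adapter parameters:", adapter)
print("adapter share of full:", adapter / full)

# Tiny forward pass with a rank-1 adapter on a 2 x 2 identity matrix.
W = [[1, 0], [0, 1]]
A = [[1, 1]]
B = [[1], [2]]
print(lora_forward(W, A, B, x=[1, 2], alpha=2, rank=1))
```

With these assumed sizes the adapter holds under one percent of the parameters of the layer it modifies, which is why fine-tuning with LoRA needs far less memory and compute than updating the whole matrix.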

These optimizations are not new — they have been known in the industry for several years. However, Unsloth integrates them into a coherent, easy-to-use package, which represents significant added value. Instead of independently patching various libraries and solutions, the user has a ready-made set of tools.

Practical applications for Polish creators and companies

In Poland, the AI ecosystem is still developing but dynamic. Unsloth opens concrete opportunities for various groups. AI startups can use the platform to prototype solutions without huge investments in cloud infrastructure. Instead of paying OpenAI for each API request, they can run their own model locally and experiment at low cost.

For creative agencies engaged in content creation, Unsloth opens the possibility of building internal tools for generating text or code without depending on external services. This is particularly important when working with confidential client data, because a model that runs locally does not need to send that data outside the company's infrastructure.

Researchers and academics can use Unsloth for experiments with new model architectures without needing access to expensive computing hardware. Polish universities, which have limited budgets for AI research, can use their existing GPUs much more efficiently.

For individual developers and hobbyists, Unsloth democratizes access to AI. You don't need to be an employee of a large corporation to work with the latest language models. All you need is a laptop with a decent GPU and a desire to learn.

Limitations and challenges you need to know

Before installing Unsloth, however, it is worth understanding the limitations of this approach. The first is hardware requirements. Although Unsloth optimizes performance, running LLMs still requires a decent GPU. Laptops with integrated graphics may struggle, and even optimized 7B models can run slowly on weaker hardware. Users without a dedicated GPU are likely to be disappointed.

Second, learning curve. Unsloth is a tool for technical users — programmers and engineers. If you've never worked with Python, CUDA, or transformer models, implementing Unsloth will be frustrating. The documentation is good, but this is not a "click and run" type tool.

Third, model limitations. Even the best available open-source models, such as Mistral or Llama 2, do not match the performance of the latest models from OpenAI or Google. GPT-4 and Claude 3 are simply more capable. If you need absolutely the best performance, local models may be insufficient.

Fourth, ongoing updates and support. The open-source AI ecosystem is changing rapidly. Unsloth must constantly update to support new models and optimizations. The question is whether the team behind this project has the resources to keep pace with industry development.

Unsloth in the context of competition

Unsloth is not the only player in the local AI market. Ollama is a popular alternative that also allows running models locally. Ollama is more beginner-friendly: it has a simpler interface and does not require deep programming skills. Unsloth, by contrast, is aimed at more experienced users and offers deeper optimizations.

LM Studio is another alternative with a GUI that also simplifies the process of running models. For users who prefer a graphical interface over the command line, LM Studio may be more attractive. However, again, when it comes to pure performance and optimizations, Unsloth offers more.

Comparing Unsloth to commercial cloud services, the equation is more complex. OpenAI API or Anthropic Claude API offer unmatched performance and reliability, but at a cost. For projects where cost is a critical factor or where data privacy is absolutely necessary, Unsloth wins. For projects requiring the best possible answer quality, the cloud remains the better choice.

The future of local AI and Unsloth's role

The trend toward local AI will strengthen. Several factors drive this development: growing concerns about data privacy, regulations such as GDPR in Europe, the desire to reduce operating costs, and increasingly better optimizations that make models run faster on weaker hardware.

Unsloth positions itself as a key tool in this ecosystem. If the team behind the project maintains the pace of innovation and supports new models and optimizations, it could become the standard tool for developers working with local AI. The 5.0 rating on Product Hunt suggests that the community already recognizes this.

However, success is not guaranteed. Competition in the open-source AI space is fierce, and large companies like Meta or Microsoft are also investing in local AI tools. Unsloth must find its niche — and it seems that niche is advanced users looking for maximum performance and optimization.

Practical steps to get started with Unsloth

If you're interested in trying Unsloth, here's what you should know. First, check your hardware. The ideal setup is an NVIDIA GPU with at least 8 GB of VRAM. AMD GPUs may work, but support is weaker. Running on a CPU is possible, but will be much slower.
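A rough way to sanity-check the hardware question is to estimate how much memory the weights alone need at a given precision. The sketch below is a back-of-the-envelope estimate, not a measurement. The 20% allowance for activations and cache is an assumption chosen for illustration, and the function names are hypothetical.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_vram(params_billions, bits_per_weight, vram_gb, overhead_fraction=0.2):
    """Rough fit check; overhead_fraction is an assumed allowance, not a measured value."""
    needed = weight_memory_gb(params_billions, bits_per_weight) * (1 + overhead_fraction)
    return needed <= vram_gb


# A 7B-parameter model on a card with 8 GB of VRAM, at several precisions.
for bits in (32, 16, 8, 4):
    size = weight_memory_gb(7, bits)
    ok = fits_in_vram(7, bits, vram_gb=8)
    print(f"{bits:>2}-bit: about {size} GB of weights, fits in 8 GB: {ok}")
```

Under these assumptions a 7B model only fits comfortably on an 8 GB card once it is quantized to 4 bits, which is consistent with starting small and using quantized models on consumer hardware.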

Second, install required dependencies. You'll need Python, NVIDIA's CUDA toolkit, and libraries like PyTorch. The installation process is well documented, but requires some technical knowledge.
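Before going further, it can help to confirm which Python packages are already present. The sketch below uses only the standard library and does not install or import anything heavy. The import names `torch` and `unsloth` are the ones these packages are normally imported under, and the helper name `missing_packages` is made up for this example. It does not check the CUDA toolkit itself.

```python
import importlib.util
import sys


def missing_packages(names):
    """Return the subset of top-level package names that cannot be found."""
    missing = []
    for name in names:
        try:
            found = importlib.util.find_spec(name) is not None
        except (ImportError, ValueError):
            found = False
        if not found:
            missing.append(name)
    return missing


print("Python version:", sys.version_info[:2])
print("Missing packages:", missing_packages(["torch", "unsloth"]))
```

An empty list means both packages can be located in the current environment; anything listed still needs to be installed by following the project's documentation.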

Third, choose a model. Start with something light, like Mistral 7B, to see how it works on your hardware. Once you get familiar with the platform, you can experiment with larger models.

Fourth, fine-tune the model. This is where Unsloth really stands out. Use LoRA to adapt the model to your specialized tasks. The Unsloth documentation contains worked examples of how to do this.
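As a rough orientation, the sketch below shows what loading a 4-bit model and attaching LoRA adapters can look like with Unsloth's Python package. It is a sketch under assumptions, not the definitive workflow: the model name, sequence length, and LoRA settings are illustrative choices, and the exact arguments should be checked against the current Unsloth documentation. The import sits inside the function so the settings can be inspected on a machine without a GPU.

```python
# Illustrative LoRA settings; these values are assumptions, not recommendations.
LORA_SETTINGS = {
    "r": 16,                      # rank of the low-rank adapter matrices
    "lora_alpha": 16,             # scaling factor applied to the adapter output
    "lora_dropout": 0,
    "bias": "none",
    "target_modules": ["q_proj", "k_proj", "v_proj", "o_proj"],
}


def load_model_with_lora(model_name="unsloth/mistral-7b-bnb-4bit", max_seq_length=2048):
    """Load a 4-bit model and wrap it with LoRA adapters (needs unsloth and a GPU)."""
    # Imported lazily so this file can be read and tested without unsloth installed.
    from unsloth import FastLanguageModel

    model, tokenizer = FastLanguageModel.from_pretrained(
        model_name=model_name,
        max_seq_length=max_seq_length,
        load_in_4bit=True,
    )
    model = FastLanguageModel.get_peft_model(model, **LORA_SETTINGS)
    return model, tokenizer


print("LoRA rank:", LORA_SETTINGS["r"])
```

Calling `load_model_with_lora()` downloads the model on first use, so it is best run once the hardware and dependency checks have passed. The returned model can then be passed to a training loop of your choice.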

Unsloth Studio represents an important moment in the democratization of AI. This tool shows that you don't need to be a giant corporation to work with cutting-edge models. For Polish developers, companies, and scientists, this opens new possibilities — both technical and business. Will Unsloth become a standard tool? That depends on whether the team can maintain the pace of innovation and support a growing community of users.
