We Found Eight Attack Vectors Inside AWS Bedrock. Here's What Attackers Can Do with Them

Photo: The Hacker News

Eight critical security vulnerabilities discovered in the AWS Bedrock infrastructure shed new light on how susceptible AI systems are to Prompt Injection and Remote Code Execution (RCE) attacks. Researchers from the Orca Security team demonstrated that flaws in the platform's security could have allowed cybercriminals to break container isolation and gain unauthorized access to language models and other users' data. By exploiting specific attack vectors within Bedrock, attackers could have manipulated AI-generated outputs and even stolen confidential authentication keys. For developers and enterprises that rely on cloud services, this calls for an immediate review of security policies. Although AWS has already patched the identified bugs, the incident underscores that traditional firewalls are insufficient in the era of generative AI. The practical implication for users is clear: a transition to the Zero Trust Network Access (ZTNA) model is essential, because it eliminates lateral movement within the network and connects users directly to applications instead of granting access to entire infrastructure segments. Companies must understand that AI model security is not just a matter of content filtering but, above all, of rigorous resource separation at the system level. Effective protection against modern threats now requires modernizing remote access and eliminating default trust within cloud computing ecosystems.

In the corporate world, where the pace of generative AI adoption determines market advantage, AWS Bedrock has become the foundation for thousands of enterprises. The platform promises something that a bare language model does not: an ecosystem in which Foundation Models (FMs) are plugged directly into the company's bloodstream. However, the same connectivity that allows AI agents to search Salesforce, trigger AWS Lambda functions, or index resources on SharePoint also opens doors that many security architects have not yet managed to close. The latest analyses point to as many as eight attack vectors that can turn a helpful assistant into a professional tool for data exfiltration.

The problem lies not in the LLM technology itself, but in the architecture of permissions and integrations. When we give a model access to corporate databases, it ceases to be just a text generator and becomes a user with specific privileges. If an attacker manages to manipulate the instructions reaching AWS Bedrock, they effectively gain control over the processes the platform handles. The scale of the risk is enormous, because it covers not only information leaks but also unauthorized actions within the cloud infrastructure.

The Trap of Excessive AI Agent Permissions

Agents in AWS Bedrock rely on mechanisms designed to make developers' work easier, often at the expense of rigorous access control. The first and most obvious attack vector is the use of Prompt Injection to override the system's protective barriers. By sending a crafted query, an attacker can force the agent to ignore its original role and instruct it to query the Salesforce database in ways that fall outside the scope of standard operations. This is a classic example of privilege escalation, in which the AI becomes an unwitting intermediary in digital identity theft.
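One way to picture the countermeasure is an allowlist that is enforced outside the model, so that an injected prompt cannot rewrite it. The following is a minimal sketch under stated assumptions, not Bedrock's own mechanism: the role name, the action names, and the `ToolCall` type are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical mapping from an agent role to the actions it may request.
# These names are illustrative; they are not Bedrock or Salesforce identifiers.
ALLOWED_ACTIONS = {
    "support_assistant": {"salesforce.read_case", "salesforce.read_contact"},
}


@dataclass(frozen=True)
class ToolCall:
    role: str    # the role the agent was deployed with
    action: str  # the action the model is asking to perform


def is_permitted(call: ToolCall) -> bool:
    """Return True only if the action is explicitly allowlisted for the role."""
    # Unknown roles get an empty set, so everything is denied by default.
    return call.action in ALLOWED_ACTIONS.get(call.role, set())
```

Because the check runs in ordinary code after the model has produced its request, a prompt that tells the agent to "ignore its role" changes what the model asks for, but not what the surrounding system is willing to execute.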


Another critical flashpoint is the integration with AWS Lambda. Many implementations assume that an AI agent needs broad permissions to invoke functions to ensure smooth operation. However, if the Lambda function does not have its own granular security measures, gaining control over the agent's session allows an attacker to run code inside the company's secure perimeter. In this way, an attacker can modify records, delete data, or worse, create new cloud resources at the victim's expense, bypassing traditional intrusion detection systems (IDS).
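To make the contrast with "broad permissions" concrete, the sketch below builds an IAM policy document that lets an agent invoke only explicitly named functions. The policy structure and the `lambda:InvokeFunction` action are standard IAM; the helper names and the example ARN (account ID and function name) are hypothetical placeholders, and this is an illustration rather than a recommended production policy.

```python
import json


def build_invoke_policy(function_arns):
    """Build an IAM policy document that allows invoking only the named functions."""
    if not function_arns:
        raise ValueError("at least one function ARN is required")
    for arn in function_arns:
        # Refuse wildcards so the policy cannot silently widen to every function.
        if "*" in arn:
            raise ValueError(f"wildcard not allowed in function ARN: {arn}")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "lambda:InvokeFunction",
                "Resource": sorted(function_arns),
            }
        ],
    }


def to_json(policy):
    """Serialize the policy document for review or for attaching to a role."""
    return json.dumps(policy, indent=2)
```

A call such as `build_invoke_policy(["arn:aws:lambda:us-east-1:123456789012:function:lookup-order"])` yields a policy scoped to that one hypothetical function. The function itself should still validate its inputs, since the policy only limits which functions can be reached, not what they do once invoked.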

Knowledge Poisoning and Data Source Manipulation

Attack vectors in AWS Bedrock also include manipulation of the Retrieval-Augmented Generation (RAG) process. These systems rely on retrieving information from external sources, such as SharePoint, to provide a precise answer. An attacker who has access to even one document within the organization can insert malicious instructions into it. When the AI agent indexes this document, the "poisoned" knowledge becomes part of its decision-making logic. This is a form of Indirect Prompt Injection, which is exceptionally difficult to detect because it does not come directly from the end user.

- Data exfiltration via side channels: An agent can be forced to send sensitive data to external servers under the guise of error logging or API requests.

- Model Hijacking: Using specific prompts to change the behavior of the Foundation Model to generate biased or harmful content.

- Abuse in Knowledge Bases: Injecting false information into knowledge bases, leading to incorrect business decisions made by automated systems.

- User session hijacking: Exploiting vulnerabilities in the platform's authentication mechanisms to intercept access tokens.
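As an illustration of the indirect prompt injection risk described above, the sketch below screens retrieved text for instruction-like phrases before it is handed to a model. It is a deliberately simple heuristic under stated assumptions: the patterns and function names are hypothetical, the list is far from exhaustive, and a determined attacker can phrase instructions so that they evade it. Treat it as one layer, not a complete defense.

```python
import re

# Hypothetical examples of phrases that look like instructions to the model
# rather than ordinary document content. Real deployments would need far more.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"you are now\b", re.IGNORECASE),
    re.compile(r"send .* to https?://", re.IGNORECASE),
]


def flag_chunk(text):
    """Return the patterns that matched the text; an empty list means no match."""
    return [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(text)]


def partition_chunks(chunks):
    """Split retrieved chunks into (clean, quarantined) lists."""
    clean, quarantined = [], []
    for chunk in chunks:
        if flag_chunk(chunk):
            quarantined.append(chunk)  # hold back for human review
        else:
            clean.append(chunk)
    return clean, quarantined
```

Quarantining instead of silently dropping flagged chunks keeps a record of which document carried the suspicious text, which helps trace a poisoned file back to whoever edited it.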

It is worth noting that AWS Bedrock operates at the intersection of many services. Every point of contact is a potential vulnerability. If the mechanism connecting the agent to the document repository does not apply the Zero Trust principle, any person with the right to edit files on the company intranet becomes a potential administrator of the AI system. This drastically expands the attack surface, shifting the burden of responsibility from IT teams to every employee with content creation privileges.

From VPN to Zero Trust Network Access in the AI Era

Traditional methods of securing access, based on VPN, are proving insufficient in the face of threats originating from within AI ecosystems. Modern security architecture must evolve toward Zero Trust Network Access (ZTNA). Instead of protecting the network perimeter, we must protect specific applications and resources, eliminating the possibility of lateral movement. In the context of AWS Bedrock, this means that an AI agent should not have default trust in any data source, and every action it takes must be verified against the permissions of the user on whose behalf it is acting.

Access modernization is not just a replacement of tools, but a paradigm shift. Instead of connecting a user to the entire network, ZTNA connects them directly to the application. The same principle must be applied to AI agents. Every request to Lambda or Salesforce should have a unique security context. Without this, AWS Bedrock will remain a powerful but risky tool that can be turned against an organization by a determined attacker exploiting one of the eight discovered attack vectors.
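The idea of a unique security context per request can be sketched as follows. This is a minimal illustration under stated assumptions, not an AWS API: `SecurityContext`, `PermissionDenied`, `invoke_on_behalf`, and the permission strings are all hypothetical names. The point is only that the check uses the permissions of the user behind the request, not those of the agent.

```python
import uuid
from dataclasses import dataclass, field


class PermissionDenied(Exception):
    """Raised when the acting user lacks the permission an action requires."""


@dataclass(frozen=True)
class SecurityContext:
    user_id: str
    permissions: frozenset  # permissions held by the user, not by the agent
    # A fresh identifier per request, so each action can be audited on its own.
    request_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def invoke_on_behalf(ctx, required_permission, action):
    """Run `action` only if the user behind the request holds the permission."""
    if required_permission not in ctx.permissions:
        raise PermissionDenied(
            f"request {ctx.request_id}: user {ctx.user_id} lacks {required_permission}"
        )
    return action()
```

For example, with `ctx = SecurityContext("alice", frozenset({"crm:read"}))`, a call requiring `"crm:read"` runs, while one requiring `"crm:delete"` raises `PermissionDenied`, no matter what the agent was instructed to do.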

"Connectivity is the greatest strength of the Bedrock platform, but without rigorous granular control, it becomes its greatest weakness. We cannot trust AI agents just because they operate inside our cloud."

The foundation of a secure future with generative AI is understanding that these models are dynamic and unpredictable. Companies must stop treating AWS Bedrock as a black box and start viewing it as another element of critical infrastructure, one that requires real-time monitoring and isolation at the level of micro-segmentation. Only by eliminating lateral movement and tying identities directly to actions can the potential of Amazon Bedrock be fully realized without compromising the integrity of the enterprise.

In the coming months, a key challenge for Chief Information Security Officers (CISOs) will be auditing existing AI deployments for the eight vectors described here. Securing prompts is just the tip of the iceberg; the real battle will be fought at the level of system integrations and IAM permissions. Organizations that are quickest to implement ZTNA principles in their AI ecosystems will not only avoid spectacular leaks but will also build a foundation for far more advanced and autonomous systems that resist manipulation attempts from both outside and inside the network.

More from Security

$285 Million Drift Hack Traced to Six-Month DPRK Social Engineering Operation

36 Malicious npm Packages Exploited Redis, PostgreSQL to Deploy Persistent Implants

Fortinet Patches Actively Exploited CVE-2026-35616 in FortiClient EMS

China-Linked TA416 Targets European Governments with PlugX and OAuth-Based Phishing

Related Articles

How LiteLLM Turned Developer Machines Into Credential Vaults for Attackers

Apr 6

Qilin and Warlock Ransomware Use Vulnerable Drivers to Disable 300+ EDR Tools

Apr 6

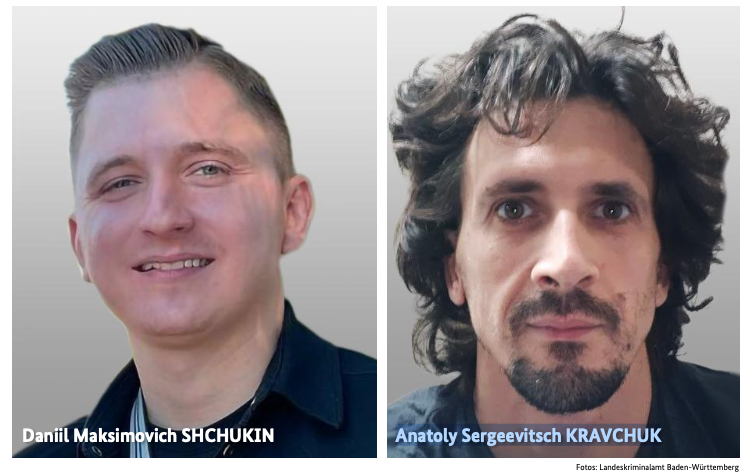

BKA Identifies REvil Leaders Behind 130 German Ransomware Attacks

Apr 6