Billy.sh

Photo: Product Hunt AI

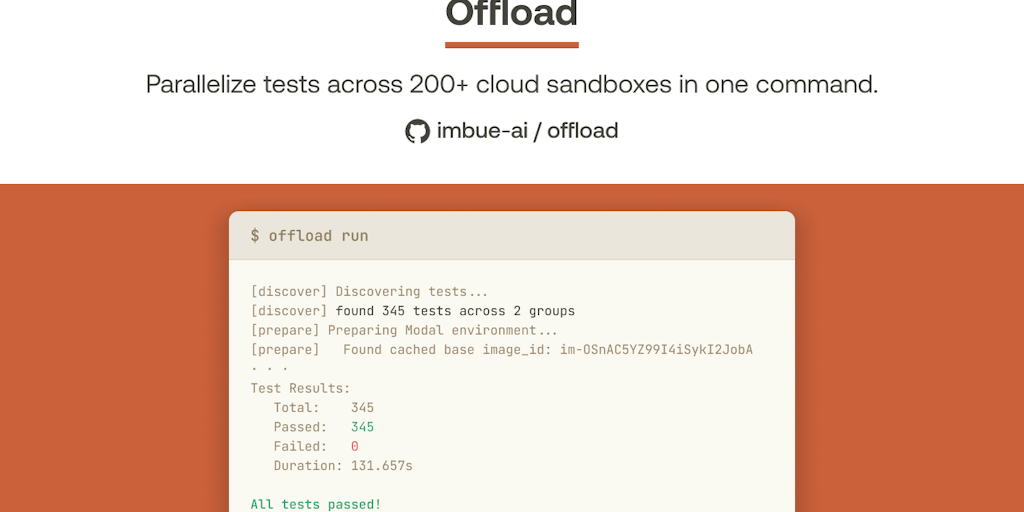

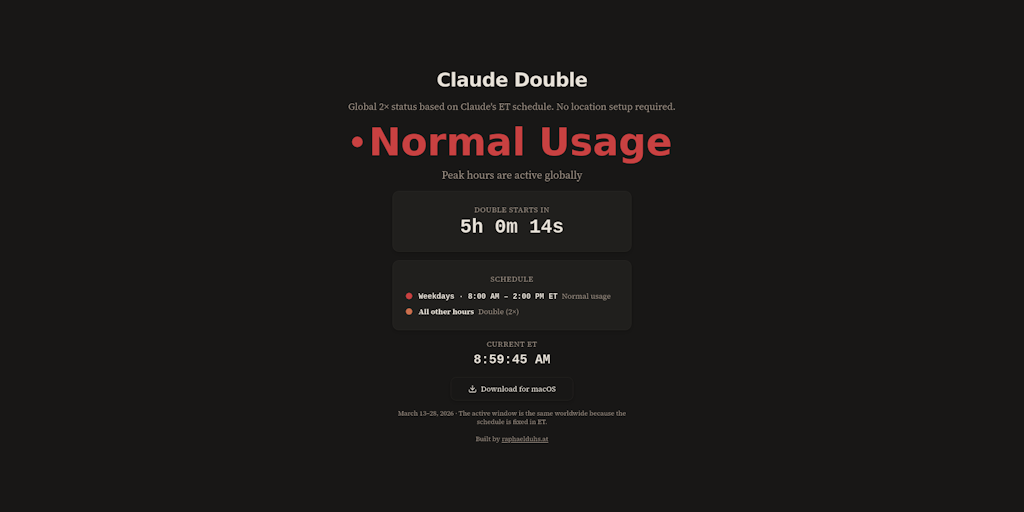

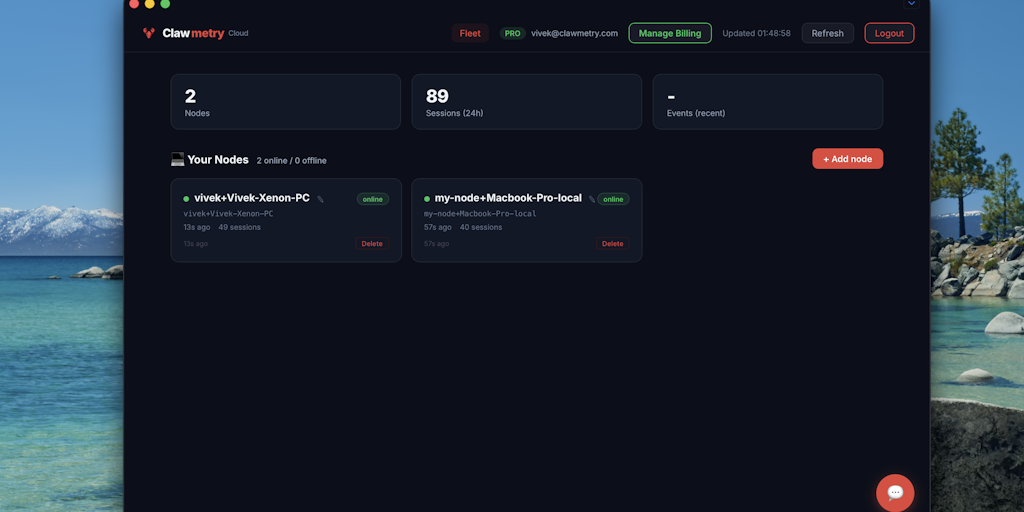

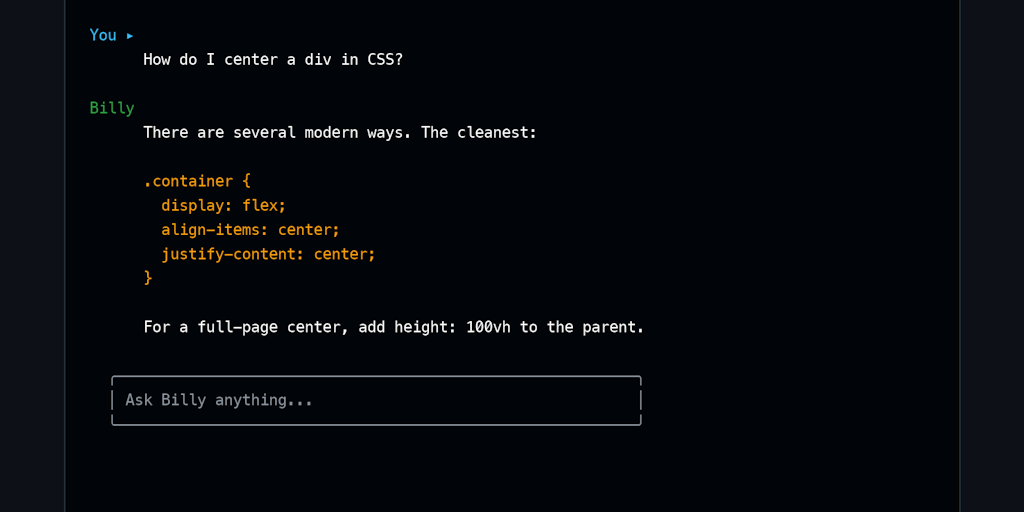

Billy.sh is an AI coding assistant that works directly in the terminal, powered by Ollama. Unlike cloud-based tools such as GitHub Copilot, it runs entirely on the user's own computer, which means code stays private and the assistant works offline. A key advantage of Billy.sh is context memory between sessions: the assistant remembers conversation history and can resume previous chats. It can also suggest or execute shell commands, but only with the user's consent. The developer also offers a bundled build with Ollama included, which considerably shortens setup. The tool is available in a free version, with a Pro upgrade starting at $19 as a one-time payment. For programmers who work with sensitive code or want independence from cloud services, it is a solid alternative. Billy.sh enters the market at a time when more and more developers are looking for local AI solutions that do not send their work to external servers.

The terminal has recently become a battleground for AI assistants. Every major player, from OpenAI with Codex CLI to GitHub with Copilot CLI, is trying to squeeze into the tool that developers have lived in for decades. But Billy.sh takes a different approach. Instead of sending code to the cloud, where it can be processed, stored, and analyzed, Billy keeps everything local. This is not a minor distinction; it is a fundamentally different stance on security, privacy, and developer independence.

The new assistant, which just appeared on Product Hunt, uses Ollama, a platform for running large language models on your own hardware. Billy.sh is a terminal-native AI coding assistant that works entirely on your machine, without connecting to any company's servers. Sounds like a solution for the paranoid? Maybe. But it's also a solution for professionals working with sensitive code, for companies that don't want to share their intellectual property, and for anyone who simply prefers not to depend on internet availability or API limits.

The question is: can the local approach compete with the marketing machine and resources of tech giants? Is Billy.sh truly a breakthrough, or just a niche tool for open source enthusiasts?

Privacy as the main currency in the age of the cloud

At a time when GitHub Copilot and other cloud-based assistants dominate the market, Billy.sh has set itself a clear goal: your code never leaves your computer. This is not an abstract promise; it follows from the system architecture. When you use Billy.sh, the AI model runs locally through Ollama, which means all queries, code fragments, variables, and business logic stay on your machine.

For many developers, this is a crucial matter. Whether they work on client projects, startups, or their own products, few developers want their code transmitted to Microsoft's, OpenAI's, or any other provider's servers. This is not about paranoia; it's a real security concern. Every cloud connection is a potential attack vector, every data transfer is a chance for a leak, and every log is a trace that can be exploited.

Billy.sh solves this problem elegantly. The model it runs is typically Llama, Mistral, or another open source model available through Ollama. These models are smaller than GPT-4 or Claude, but for most coding tasks, from code completion to error explanation, they are sufficient. Most importantly, they can work fully offline, with no internet connection at all, if you want them to.
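To make "local" concrete, here is a minimal sketch of what a query to a locally running Ollama server looks like. It uses Ollama's documented default endpoint on `localhost:11434`; the helper names `build_request` and `ask_local_model` and the default model `mistral` are illustrative assumptions, and nothing here is claimed to be how Billy.sh itself talks to Ollama.

```python
import json
import urllib.request

# Ollama's default local endpoint; the request never targets a remote host.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "mistral") -> urllib.request.Request:
    """Build a POST request for a locally running Ollama server."""
    # "stream": False asks for one JSON object instead of a token stream.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "mistral") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With Ollama running and the model already pulled, `ask_local_model("Explain this traceback: ...")` would return the answer as a string, and the only network hop is to the loopback interface.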

Ollama as foundation: simplicity and control

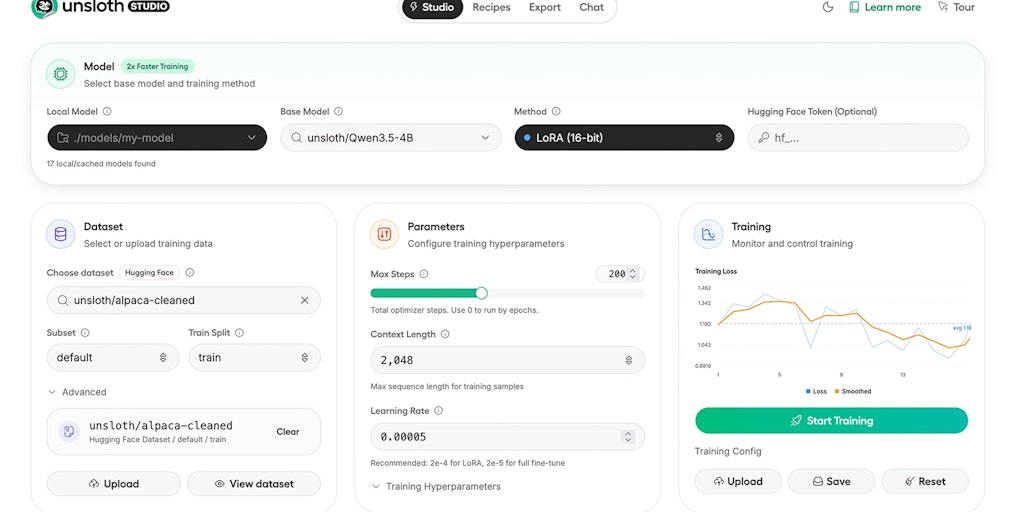

To understand why Billy.sh has potential, you must first understand Ollama. It's a tool that appeared relatively recently but quickly became popular among developers who want to experiment with AI models without wrestling with GPU drivers, CUDA, or similar setup work. Ollama abstracts these technical details away and simply lets you download a model and start using it.

Billy.sh builds on this simplicity. Instead of forcing the user to manually install Ollama, configure models, and write scripts, Billy offers a bundled build with Ollama already included. You download Billy, run the installer, and you're done: you have a working AI assistant in the terminal without any additional steps. This is key to adoption. Most developers don't want to spend hours on configuration; they just want to start working.

The control that Billy.sh gives the user is its second strength. You can choose which model you want to run. Want a smaller, faster model for a laptop with limited power? Choose Mistral 7B. Want something more advanced? Install Llama 2 13B. Everything is under your control, and Billy.sh works with any of them.

Features that matter in practice

Billy.sh is not just an assistant that answers questions. It's a tool built with the real developer workflow in mind. The first key feature is remembering context between sessions. You close the terminal, return to the project the next day, and Billy knows what you were working on. This seems simple, but in practice it removes a lot of friction. You don't have to re-explain the project context, the constraints, or the style guide, because Billy remembers all of it.

The second feature is the ability to suggest and run shell commands. This is dangerous if done wrong: the last thing you want is for an AI to run commands on your computer without your permission. Billy.sh solves this by requiring explicit consent. The AI suggests a command, you review it, and it is executed only after you accept. This strikes a balance between productivity and security.
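The suggest, review, accept flow can be sketched in a few lines. This is an illustration of the general pattern under stated assumptions, not Billy.sh's actual implementation: the function name `run_with_consent` and the `[y/N]` prompt are hypothetical, and the default answer is deliberately "no".

```python
import shlex
import subprocess
from typing import Callable, Optional


def run_with_consent(
    command: str,
    ask: Callable[[str], str] = input,
) -> Optional[subprocess.CompletedProcess]:
    """Show a suggested command and run it only after an explicit 'y'."""
    answer = ask(f"Suggested command:\n  {command}\nRun it? [y/N] ")
    if answer.strip().lower() != "y":
        # Anything other than an explicit yes means the command is not run.
        return None
    # shlex.split plus the default shell=False means the string is not
    # handed to a shell, so pipes and redirects are not interpreted.
    return subprocess.run(shlex.split(command), capture_output=True, text=True)
```

Passing the prompt function in as `ask` keeps the consent step easy to test and makes it impossible to reach `subprocess.run` without going through it.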

The third feature is resuming chat history. If you interrupt your work with Billy during a session, you can return to the previous conversation. This is not just remembering the last session — it's a full conversation history that you can browse, analyze, and build upon.
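Session memory of this kind usually comes down to writing the conversation to disk and reading it back on the next start. The sketch below shows one simple way to do that with a JSON file; the file layout, the `role`/`content` message shape, and the function names are assumptions made for illustration, since the article does not describe how Billy.sh stores its history.

```python
import json
from pathlib import Path


def load_history(path: Path) -> list[dict]:
    """Return saved messages, or an empty list for a first session."""
    if not path.exists():
        return []
    return json.loads(path.read_text(encoding="utf-8"))


def append_message(path: Path, role: str, content: str) -> list[dict]:
    """Add one message to the history file and return the full history."""
    history = load_history(path)
    history.append({"role": role, "content": content})
    # Create the parent directory on first use, then rewrite the whole file.
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(history, indent=2), encoding="utf-8")
    return history
```

On the next launch, calling `load_history` with the same path returns the earlier messages, which can then be passed back to the model as context.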

- System-level privacy — no code leaves your computer

- Offline work — you don't need the internet to use the assistant

- Context remembering — Billy knows what you're working on and the project context

- Command suggestions — with requirement for explicit consent before execution

- Chat history — resuming previous conversations and building on them

- Bundled Ollama — one-step installation without additional configuration

Pricing model: free plus optional premium

Billy.sh offers a freemium model that is fair and transparent. The free version gives you access to all basic features — you can run open source models, work offline, use context remembering. There are no limits on the number of queries, no watermarks, no pressure to upgrade.

If you want more, the Pro upgrade costs $19 as a one-time payment. This is a price that many developers will pay without hesitation: it is roughly the cost of a month or two of a typical subscription assistant. For this amount you presumably get more advanced features, and possibly better support or integrations with other tools. But even without it, the free version is fully functional.

This approach to pricing is very different from the subscription model preferred by GitHub Copilot or OpenAI. There you pay every month, always. Here you pay once, if you want. This is psychologically different and more attractive to many users.
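The one-time versus subscription comparison can be made concrete with a little arithmetic. The sketch below uses the $19 Pro price from the article; the monthly prices in the usage note are illustrative placeholders, not quotes for any particular product.

```python
import math


def subscription_cost(monthly_price: float, months: int) -> float:
    """Cumulative cost of a subscription after the given number of months."""
    return monthly_price * months


def months_to_break_even(one_time_price: float, monthly_price: float) -> int:
    """First month in which the subscription has cost at least the one-time price."""
    if monthly_price <= 0:
        raise ValueError("monthly_price must be positive")
    return math.ceil(one_time_price / monthly_price)
```

For example, `months_to_break_even(19, 10)` gives 2 and `months_to_break_even(19, 20)` gives 1, while `subscription_cost(10, 12)` shows that a hypothetical $10 plan adds up to $120 over a year.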

Where Billy.sh really shines — and where it has limitations

Billy.sh is excellent for a few specific scenarios. If you work with sensitive code, whether financial, medical, or military, local privacy is non-negotiable. If you work in an environment with no internet access or very limited access, Billy.sh is one of the few practical options. If you don't want to depend on API availability or provider rate limits, Billy.sh gives you independence.

But there's also the other side of the coin. The open source models that Billy.sh runs are smaller and less capable than GPT-4 or Claude 3. For simple tasks such as code completion, error explanation, and refactoring, this is not a problem. But if you need deep analysis, complex architectural reasoning, or advanced logic, open source models may fall short.

The second limitation is hardware performance. If you have a laptop with 8GB RAM and a four-core processor, running larger models will be slow. Billy.sh works, but not as fast as you'd like. This is a natural trade-off between privacy and performance — you need to have powerful enough hardware to run models locally.
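A rough back-of-the-envelope estimate shows why RAM matters. The sketch below computes the size of the model weights alone from the parameter count and the number of bits stored per weight; the function name is illustrative, 4-bit quantization is assumed as a common choice for local models, and real memory use is higher once the context cache and runtime overhead are added.

```python
def weights_size_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights alone, in gigabytes (10^9 bytes)."""
    # params_billion * 1e9 weights * (bits / 8) bytes each, divided by 1e9.
    return params_billion * bits_per_weight / 8
```

By this estimate a 7B model at 4 bits needs about 3.5 GB for weights and a 13B model about 6.5 GB, which leaves little headroom on an 8 GB laptop; the same 7B model at 16 bits would need about 14 GB.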

The third limitation is the ecosystem. GitHub Copilot integrates deeply with VS Code, JetBrains IDEs, and other editors. Billy.sh is a terminal-native tool — it works perfectly in the terminal, but if you spend most of your time in an IDE, you have to switch between tools.

Competition and market position

The AI coding assistant market is crowded. GitHub Copilot has a huge market share thanks to VS Code integration and Microsoft's backing. OpenAI has ChatGPT Plus with Code Interpreter. Anthropic has Claude with excellent code support. But they all work in the cloud, they all require an account, and they all send your code to their servers.

Billy.sh has a different niche. It doesn't try to compete on model power or speed. It competes on privacy, independence, and control. This is a segment of the market that is growing — more and more companies, especially in finance, healthcare, and security, are looking for solutions that don't send data to the cloud.

But there are also a few other tools in this niche. Continue.dev is an open source IDE extension that also uses local models. Codeium has a self-hosted option. Tabnine offers local deployment. Billy.sh must stand out, and it does so through simplicity, bundled Ollama, and a focus on a terminal-native experience.

For Polish developers — practical significance

In Poland, awareness of privacy and data security issues is growing. GDPR is no longer just a buzzword; it's a reality that companies must deal with. If you work for a Polish company that processes personal data, sending code that embeds or references that data to the cloud can be problematic from a compliance perspective. Keeping the model local, as Billy.sh does, avoids that particular problem.

Additionally, the Polish IT market has many small and medium-sized companies for which recurring subscriptions such as GitHub Copilot Pro ($10/month) add up across a team. The free version of Billy.sh with an optional one-time $19 upgrade is much more affordable. This can be a real alternative for Polish startups and freelancers.

Third, Poland has a strong open source community and enthusiasts of local solutions. Billy.sh, being based on open source Ollama and open source models, can find a natural user base there.

The future: Billy.sh in the context of AI evolution

Billy.sh appears at a time when the discussion about AI privacy is growing louder. European regulations, user awareness, and enterprise concerns are all making local solutions more important. This is not a temporary trend; it is a fundamental change in how we think about AI and data.

If Billy.sh develops well, it could be the beginning of a larger trend — a shift from cloud-first AI assistants to hybrid or local-first solutions. This doesn't mean GitHub Copilot or ChatGPT will disappear — they will continue to dominate for users who don't care about privacy. But more and more options will emerge for those who do care.

Ultimately, Billy.sh is a symbol of something important: resistance to centralization, striving for independence, and desire for control over your own tools. It may not be the most advanced AI assistant, but it may be exactly the one you need.